How to Guarantee Code Quality From an Offshore Dev Team: The 6-Layer QA Process

Offshore teams do not write bad code because they are offshore. They write bad code because the quality process was never defined. This 6-layer QA framework fixes that before the first line is written.

Acquaint Softtech

The Real Cause of Offshore Code Quality Problems

The most common complaint about offshore development teams is code quality. The most common diagnosis is wrong.

Offshore teams do not write bad code because they are offshore. They write bad code because the quality process was never defined. The same developers who produce unreliable output under one client produce consistent, high-quality work under another. The variable is not the team. It is the system around them.

After 13 years and 1,300+ projects, we have seen both sides of this. We have taken on engagements from clients who described their previous offshore team as unprofessional and discovered developers who were skilled but operating without any defined quality standards, review process, or feedback loop. The same developers under a structured process delivered exactly what the next client needed. The problem was never the developer. It was the absence of the onboarding framework and quality system around them.

This article covers the 6-layer QA process we apply across every offshore engagement. Each layer is a specific, implementable system, not a principle. By the end of this article you will know exactly what to put in place before your next offshore engagement starts.

This article is for:

- CTOs managing offshore dev teams who are not confident in the consistency of what is being shipped

- Engineering leads who have experienced quality problems with offshore engagements and want a systematic fix

- COOs responsible for engineering delivery who need a framework to evaluate quality without reviewing every PR

- Founders preparing to hire their first offshore developer who want to build the quality process correctly from day one

Why This Is a Process Problem, Not a People Problem

Before turning to the 6-layer framework, it is worth establishing this point clearly, because the wrong diagnosis produces the wrong solution.

Myth: Offshore developers are less skilled than in-house developers

Skill distribution across offshore markets mirrors skill distribution in Western markets. The concentration of senior Laravel talent in India is a function of scale: a larger developer population produces more senior talent in absolute terms, not a lower average. The difference in output quality almost always traces to process, not capability.

Myth: You need to hire more expensive developers to get better code

The correlation between hourly rate and quality is weak, especially in offshore markets. A $30/hour developer with a strong code review process, defined standards, and fast feedback loops consistently outperforms a $70/hour developer operating without those structures. The process multiplies the developer's capability. Without it, a senior developer produces inconsistent output just as a junior one does.

Myth: Timezone makes quality control impossible

Timezone gaps make synchronous quality control harder. They do not make quality control impossible. Async code review, automated CI/CD pipelines, and structured sprint review processes all operate independently of timezone overlap. The teams with the worst quality problems are usually not the ones with the largest timezone gaps. They are the ones without a written review process.

The Correct Diagnosis

Offshore code quality problems are almost always caused by one or more of these specific absences:

- No written coding standards document that the developer has reviewed and acknowledged

- No structured code review process with defined criteria and turnaround expectations

- No automated testing requirement on new code before a PR can be merged

- No CI/CD pipeline that enforces standards automatically on every push

- No feedback loop between what ships and what the client experiences in production

- No retrospective process that surfaces and resolves recurring quality patterns

These are exactly the 6 layers of the framework in the next section.

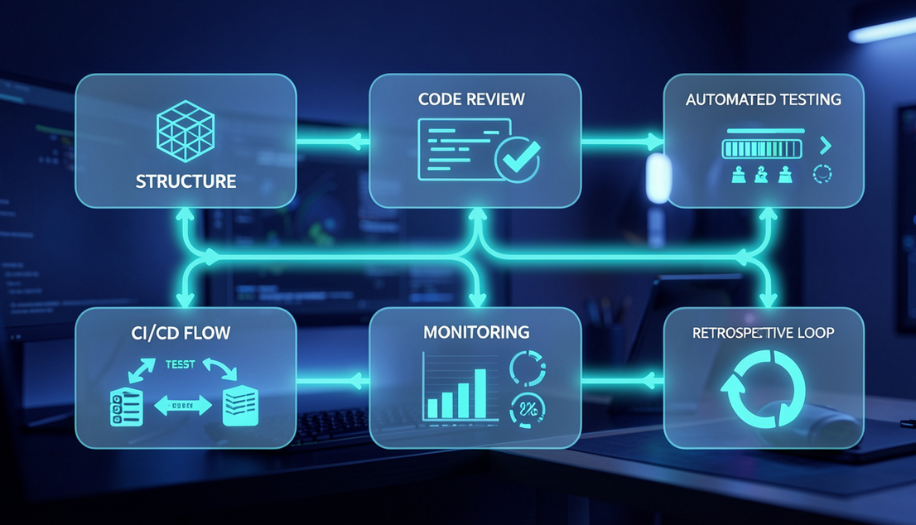

The 6-Layer QA Framework

Each layer below is a specific system with defined components. This is not a list of principles. It is a list of things to implement before the first sprint starts.

Layer 1 | Coding Standards and Architecture Agreements

Defined before Day 1. Not discussed during Sprint 1.

What it covers | A written coding standards document covering: naming conventions, file and folder structure, service class architecture patterns, Eloquent relationship standards, error handling conventions, comment and documentation requirements, and forbidden anti-patterns (logic in controllers, direct database queries outside Eloquent, hardcoded configuration values). |

How to implement | Send the standards document to the developer before Day 1 and require written acknowledgement. Include it in the repository as CONTRIBUTING.md. Review adherence to standards in every PR comment, not just obvious violations. Update the document when new patterns are established. This is a living document, not a one-time setup. |

What bad looks like | Standards document does not exist. Developer makes architecture decisions independently. Each new feature introduces a slightly different pattern. The codebase becomes inconsistent after Sprint 3. Refactoring cost multiplies with each sprint. Senior developers joining later spend weeks understanding inconsistent patterns. |

Layer 2 | Structured Code Review Process

Every PR reviewed before merge. No exceptions. No sprint pressure overrides this.

What it covers | A defined code review process covering: who reviews each PR, maximum turnaround time (24 hours during overlap window), review criteria checklist (standards adherence, test coverage, security considerations, performance implications, documentation), required approval count before merge, and a process for disputes between reviewer and developer. |

How to implement | Create a PR review checklist in GitHub or GitLab and require it to be completed on every PR. Assign a named primary reviewer for each developer. Track review turnaround time as a team metric. Never allow sprint pressure to skip the review step. A PR merged without review is a quality debt that always costs more to fix than the time saved. |

What bad looks like | PRs merged without review during busy sprint periods. Review is done by the developer themselves. Review comments are vague ('looks good' or 'needs work') without specifics. Review turnaround exceeds 48 hours. Developers learn nothing from the review process because feedback is inconsistent. |
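
As a rough illustration, the two review rules that are easiest to automate (an independent reviewer and the 24-hour turnaround) could be checked with a small script. This is a minimal Python sketch, not part of any specific tool. The `PullRequest` record and its field names are hypothetical, and the 24-hour target is read as plain wall-clock time rather than overlap-window time.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional

MAX_TURNAROUND = timedelta(hours=24)  # turnaround target from the review process


@dataclass
class PullRequest:
    # Hypothetical record; real data would come from the GitHub or GitLab API.
    author: str
    approved_by: List[str]
    opened_at: datetime
    first_review_at: Optional[datetime]


def review_violations(pr: PullRequest) -> List[str]:
    """Return the review rules this PR breaks (an empty list means none)."""
    problems = []
    # An approval only counts if it comes from someone other than the author.
    if not any(reviewer != pr.author for reviewer in pr.approved_by):
        problems.append("no independent approval")
    if pr.first_review_at is None:
        problems.append("not reviewed yet")
    elif pr.first_review_at - pr.opened_at > MAX_TURNAROUND:
        problems.append("review turnaround exceeded 24 hours")
    return problems
```

A report like this, run weekly, is one way to track review turnaround as a team metric without reading every PR.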

Layer 3 | Automated Testing Requirements

Test coverage is a merge requirement, not a nice-to-have.

What it covers | A minimum test coverage requirement on all new code: unit tests for all service class methods, feature tests for all new API endpoints, and regression tests for all bug fixes. Define the coverage threshold (typically 70 to 80% for new code) and enforce it through the CI pipeline. Include test quality in the code review checklist. |

How to implement | Add PHPUnit and Laravel's testing utilities to the project from Sprint 1. Set a minimum coverage threshold in the CI configuration and fail the build if new code falls below it. Include test file review in the PR review process. Ensure tests are meaningful: a test that asserts true is true does not count. Test the behaviour, not the implementation. |

What bad looks like | No tests on new features because there is no requirement for them. Bug fixes shipped without regression tests. Test coverage drops sprint over sprint. Production incidents increase. The developer who introduced the bug has no mechanism to verify the fix did not break adjacent behaviour. |
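
To make "coverage on new code" concrete, here is a minimal Python sketch of how such a gate could be computed. It assumes the changed line numbers (from the PR diff) and the covered line numbers (from a coverage report) have already been extracted. The function names, the input shape, and the example file path are illustrative assumptions, not the API of PHPUnit or any CI product.

```python
from typing import Dict, Set


def new_code_coverage(changed: Dict[str, Set[int]], covered: Dict[str, Set[int]]) -> float:
    """Percentage of changed lines that are executed by at least one test."""
    total = sum(len(lines) for lines in changed.values())
    if total == 0:
        return 100.0  # nothing changed, so nothing is uncovered
    hit = sum(len(lines & covered.get(path, set())) for path, lines in changed.items())
    return 100.0 * hit / total


def coverage_gate(changed: Dict[str, Set[int]], covered: Dict[str, Set[int]],
                  threshold: float = 70.0) -> bool:
    """True if the PR meets the minimum coverage threshold on new code."""
    return new_code_coverage(changed, covered) >= threshold


# Example: 3 of 4 changed lines are covered, so the PR sits at 75%.
changed = {"app/Services/InvoiceService.php": {10, 11, 12, 13}}
covered = {"app/Services/InvoiceService.php": {10, 11, 12}}
print(new_code_coverage(changed, covered))
```

Measuring only the changed lines keeps the requirement fair on a legacy codebase: the developer is accountable for the code they add, not for the untested code they inherited.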

Layer 4 | CI/CD Pipeline with Automated Quality Gates

The pipeline enforces quality automatically on every push. It is not optional and cannot be skipped.

What it covers | A CI/CD pipeline that runs on every push to any branch and enforces: static analysis (PHPStan or Larastan at a defined level), code style checks (PHP CS Fixer or Laravel Pint), automated test suite execution, minimum coverage threshold enforcement, and security vulnerability scanning on dependencies. The pipeline must pass before any PR can be merged. |

How to implement | Set up GitHub Actions or GitLab CI from Sprint 1. Configure the pipeline to block merges on failure. Do not allow exceptions. The pipeline is not a suggestion. A developer who cannot pass the pipeline has a quality problem that needs to be addressed, not bypassed. Every bypass sets a precedent that weakens the standard for every subsequent sprint. |

What bad looks like | CI pipeline exists but developers can bypass it. Pipeline runs only on the main branch, not on feature branches. Pipeline failures are treated as expected and worked around. Static analysis runs at level 0, the loosest PHPStan level, which catches only basic errors such as unknown classes and functions. The pipeline is a checkbox, not a quality gate. |
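
The gate logic itself is simple: run every check, and block the merge if any of them fails. The Python sketch below illustrates that idea under stated assumptions. The command lines assume a conventional Laravel project with PHPStan and Pint installed through Composer, and `run_gates` is a hypothetical helper, not a GitHub Actions or GitLab CI feature. In a real pipeline each command would normally be its own CI step.

```python
import subprocess
from typing import Callable, List, Sequence, Tuple

Gate = Tuple[str, Sequence[str]]

# Assumed commands for a typical Laravel project; adjust paths and flags to your setup.
GATES: List[Gate] = [
    ("static analysis", ["vendor/bin/phpstan", "analyse"]),
    ("code style", ["vendor/bin/pint", "--test"]),
    ("test suite", ["php", "artisan", "test"]),
    ("dependency audit", ["composer", "audit"]),
]


def run_command(cmd: Sequence[str]) -> int:
    """Run one command and return its exit code (127 if the tool is missing)."""
    try:
        return subprocess.run(list(cmd)).returncode
    except FileNotFoundError:
        return 127


def run_gates(gates: List[Gate], runner: Callable[[Sequence[str]], int] = run_command) -> List[str]:
    """Run every gate and return the names of the ones that failed."""
    failed = []
    for name, cmd in gates:
        if runner(cmd) != 0:
            failed.append(name)
    return failed
```

A CI job would call `run_gates(GATES)` and exit with a non-zero status if the returned list is not empty. Running all gates rather than stopping at the first failure gives the developer the full list of problems in one pass.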

Layer 5 | Production Monitoring and Error Tracking

Quality is not confirmed at merge. It is confirmed in production. You need to know immediately when something breaks.

What it covers | Production monitoring covering: error tracking (Sentry or Bugsnag with alerts for new error types), performance monitoring (response time baselines and alerts for degradation), uptime monitoring, and log aggregation for pattern analysis. Every production error is attributed to a specific commit and surfaced to the developer within the sprint. |

How to implement | Integrate Sentry or an equivalent tool from Sprint 1, before the first feature ships to production. Set up alert thresholds for new error types, error rate spikes, and performance degradation. Connect production alerts to the development Slack channel. Every alert is reviewed in the next standup. Production errors that go unreviewed become recurring costs. |

What bad looks like | No production error tracking. Bugs are discovered by users and reported via support tickets. The development team has no visibility into what is happening in production between releases. Performance degradation is undetected until it causes user-visible problems. The connection between code changes and production outcomes is invisible. |
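
The core of "alert on new error types and attribute them to a commit" can be sketched in a few lines of Python. This is an illustration only: the event shape (a `fingerprint` identifying the error type and a `commit` identifying the release it came from) is an assumption for the example, not the Sentry or Bugsnag data model.

```python
from typing import Dict, Iterable, List, Set


def new_errors_by_commit(known_fingerprints: Set[str],
                         events: Iterable[Dict[str, str]]) -> Dict[str, List[str]]:
    """Group error types never seen before by the commit that introduced them."""
    seen = set(known_fingerprints)
    found: Dict[str, List[str]] = {}
    for event in events:
        fingerprint = event["fingerprint"]
        if fingerprint in seen:
            continue  # known error type, or a repeat within this batch
        seen.add(fingerprint)
        found.setdefault(event["commit"], []).append(fingerprint)
    return found


# Example batch: "timeout" is already known, the other two types are new.
events = [
    {"fingerprint": "timeout", "commit": "c1"},
    {"fingerprint": "null-invoice", "commit": "c2"},
    {"fingerprint": "null-invoice", "commit": "c2"},
    {"fingerprint": "bad-currency", "commit": "c2"},
]
print(new_errors_by_commit({"timeout"}, events))
```

Grouping by commit is what turns an alert into a standup item: the team can see which change introduced the error and who is best placed to fix it.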

Layer 6 | Sprint Retrospective Quality Review

Every sprint ends with a structured quality review. Patterns are surfaced, root causes are identified, and process changes are made.

What it covers | A standing quality review agenda item in every sprint retrospective covering: recurring PR review comments (patterns indicate standards gaps), production errors attributed to the sprint, test coverage trend, CI pipeline failure rate, and one specific process improvement to implement in the next sprint. Quality metrics are tracked sprint over sprint. |

How to implement | Add a quality dashboard to the sprint retrospective: PR approval rate, average review turnaround, test coverage delta, production error count, and CI failure rate. Review trends, not just individual data points. If the same type of PR comment recurs across three sprints, the standards document needs updating, not another review comment. The retrospective is where patterns become process changes. |

What bad looks like | Retrospective covers velocity and features only. Quality metrics are not tracked. The same code review comments appear sprint after sprint with no process change. Production errors repeat without root cause analysis. Quality problems accumulate rather than being systematically resolved. |
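
The "same comment across three sprints" rule can be checked mechanically if review comments are tagged by topic. The Python sketch below assumes such tagging already exists. The tag names and the `recurring_topics` helper are hypothetical.

```python
from typing import List


def recurring_topics(sprints: List[List[str]], min_sprints: int = 3) -> List[str]:
    """Topics that appear in every one of the last `min_sprints` sprints.

    `sprints` holds one list of review-comment topic tags per sprint, oldest first.
    """
    if len(sprints) < min_sprints:
        return []  # not enough history to call anything a pattern
    recent = sprints[-min_sprints:]
    common = set(recent[0])
    for tags in recent[1:]:
        common &= set(tags)
    return sorted(common)


# Example: "naming" shows up in each of the last three sprints.
history = [
    ["naming", "query-in-controller"],
    ["naming", "missing-test"],
    ["naming", "query-in-controller"],
    ["naming", "query-in-controller", "hardcoded-config"],
]
print(recurring_topics(history))
```

Any topic this returns is a candidate for a standards document update rather than another review comment.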

Quality Metrics to Track Every Sprint

These are the specific metrics that tell you whether the 6-layer framework is working. Review them in every sprint retrospective and track the trend across sprints.

| Quality Metric | What Good Looks Like | What Bad Looks Like |
| --- | --- | --- |
| PR first-time approval rate | 70%+ approved without revision cycles | Below 50%; most PRs require multiple rounds |
| Code review turnaround | Within 24 hours during overlap window | Frequently exceeds 48 hours |
| Test coverage on new code | 70%+ on all new feature code | Below 50% or declining sprint over sprint |
| CI pipeline pass rate | 90%+ first-pass pipeline success | Frequent pipeline failures; bypass patterns emerge |
| Production error rate | 0 new error types per sprint | New error types each sprint; repeat errors |
| Sprint scope completion | 90%+ of committed scope delivered | Regular incomplete scope blamed on complexity |
| Technical debt log | Documented, prioritised, shrinking | No log; debt addressed reactively in incidents |
| Review comment type | Majority architectural and logic | Majority style and formatting (should be automated) |
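
For the three metrics that have numeric thresholds on both sides, the good and bad bands can be turned into a simple sprint scorecard. The Python sketch below is one possible encoding. The metric key names are hypothetical, and the middle "watch" band for values between the good and bad thresholds is an assumption added for the example.

```python
from typing import Dict


def band(value: float, good_at_least: float, bad_below: float) -> str:
    """Classify a higher-is-better percentage into good / watch / bad."""
    if value >= good_at_least:
        return "good"
    if value < bad_below:
        return "bad"
    return "watch"


def assess_sprint(metrics: Dict[str, float]) -> Dict[str, str]:
    """Apply the good and bad thresholds to one sprint of metrics."""
    hours = metrics["review_turnaround_hours"]
    if hours <= 24:
        turnaround = "good"
    elif hours > 48:
        turnaround = "bad"
    else:
        turnaround = "watch"
    return {
        "pr_first_time_approval": band(metrics["pr_first_time_approval_pct"], 70, 50),
        "new_code_coverage": band(metrics["new_code_coverage_pct"], 70, 50),
        "review_turnaround": turnaround,
    }


sprint = {
    "pr_first_time_approval_pct": 72,
    "new_code_coverage_pct": 55,
    "review_turnaround_hours": 50,
}
print(assess_sprint(sprint))
```

A single sprint in the "watch" band matters less than the direction of travel, so the output is most useful when compared against the previous two or three sprints.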

When to Implement Each Layer

Ideally, all 6 layers are defined before Sprint 1 starts, although Layer 5 only becomes active once code reaches production and Layer 6 needs a sprint of data to review. If the engagement has already begun without them, implement in order of impact. The sequence below reflects implementation priority for a team that is starting from scratch mid-engagement.

Before Day 1 | Layer 1 (Standards) and Layer 4 (CI/CD pipeline) | These must exist before the developer writes a single line. Standards without enforcement are aspirations. CI without standards has nothing to enforce. |

Week 1 | Layer 2 (Code review process) and Layer 3 (Testing requirements) | Establish the review process before the first PR is submitted. The first PR reviewed with the full checklist sets the expectation for every subsequent review. |

End of Sprint 1 | Layer 5 (Production monitoring) | Configure before anything ships to production. Monitoring configured after the first production deployment is monitoring that missed the first deployment. |

Sprint 2 onwards | Layer 6 (Retrospective quality review) | Requires at least one sprint of data to review. Add the quality metrics agenda item to the Sprint 2 retrospective and run it every sprint thereafter. |

Red Flags in a Vendor's Quality Claims

When evaluating an offshore development provider, these are the quality claims to probe. Use them alongside the 15-point Laravel agency vetting checklist for a complete evaluation.

Claim 1: We maintain 100% code quality

Reality: Code quality is measurable. Ask for specific metrics: PR approval rate, test coverage percentage, CI pipeline pass rate, production error frequency. A vendor who cannot answer with specific numbers does not track these metrics.

Claim 2: We have a dedicated QA team

Reality: Ask what the QA team does specifically. If QA is a separate phase at the end of the sprint rather than integrated throughout, the defect discovery cost is 5x higher than with integrated QA. Dedicated QA and integrated QA are not the same thing.

Claim 3: Our developers are self-reviewing

Reality: A developer reviewing their own PR is not a code review. It is a second read. Independent peer review by a different developer is the minimum for a meaningful review process. Self-review of PRs is an immediate red flag.

Claim 4: We follow best practices

Reality: Ask them to define best practices specifically for Laravel: service class architecture, Eloquent query optimisation, queue management, and testing patterns. Vague answers indicate the best practices are aspirational rather than operational standards with enforcement mechanisms.

Claim 5: We have zero defects in production

Reality: Every application in production has defects. The claim of zero defects means the vendor is not monitoring production or is not disclosing incidents. Ask to see their production error tracking setup and their incident response process.

Claim 6: Client reviews code before it ships

Reality: Client code review is not a vendor quality process. It transfers the quality responsibility from the vendor to the client. A vendor whose quality process depends on the client reviewing every PR has no internal quality process.

How Acquaint Softtech Applies the 6-Layer Framework

Our 95% sprint delivery rate and 34 five-star Clutch reviews are the output of applying all 6 layers as operational standards across every staff augmentation and Laravel development engagement. Here is what each layer looks like in practice at Acquaint Softtech.

Layer 1: Standards

We maintain a shared Laravel standards document across our team. Every new developer reviews it before their first PR. It is updated whenever the team adopts a new pattern. It is linked in every PR review comment that references a standards issue.

Layer 2: Code review

Every PR requires review by a senior developer who was not involved in writing the code. We target 24-hour turnaround. Review comments reference the standards document. We track first-time approval rate per developer and per engagement.

Layer 3: Testing

We require tests for all new service class methods and all API endpoints. Coverage thresholds are configured in the CI pipeline and enforced before merge. Bug fixes include a regression test that would have caught the original bug.

Layer 4: CI/CD

GitHub Actions runs on every push: PHPStan at Level 5, Laravel Pint, the full test suite, and dependency vulnerability scanning. The pipeline cannot be bypassed. We review pipeline failure patterns in the sprint retrospective.

Layer 5: Monitoring

Sentry is configured before the first production deployment. New error types trigger an immediate Slack alert. We review the production error log in every standup and every sprint retrospective.

Layer 6: Retrospective

Every sprint retrospective includes a quality metrics review: PR approval rate, test coverage delta, production error count, and one specific process improvement. Quality trends are tracked across sprints, not just individual sprints.


Quality Is a System, Not a Standard

A coding standard without enforcement is an aspiration. A code review without a defined process is a favour. Testing without a coverage requirement is optional. CI/CD without quality gates is a deployment tool.

The 6-layer framework works because each layer depends on the one before it and enforces the one after it. Standards give the reviewer something to reference. The review process ensures standards are applied. Testing requirements give the CI pipeline something to measure. The CI pipeline enforces testing. Monitoring connects code quality to production outcomes. The retrospective closes the feedback loop by converting patterns into process changes.

If you want to evaluate whether your current offshore engagement has these layers in place, we offer a free 30-minute quality process review. We will assess your current setup against the 6-layer framework and identify the specific gaps that are generating your quality problems. Hire Laravel developers or explore our staff augmentation model to start with the framework already in place.

FAQs

How do you ensure code quality from an offshore development team?

Through a structured 6-layer process: written coding standards acknowledged before Day 1, a formal code review process with defined criteria and turnaround expectations, automated testing requirements enforced as a merge condition, a CI/CD pipeline with quality gates that block merges on failure, production monitoring with immediate alerts for new error types, and a sprint retrospective quality review that converts recurring patterns into process changes. Each layer must be in place before the engagement starts, not added reactively when quality problems appear.

What is the most common cause of poor code quality from offshore developers?

The absence of a defined code review process. In our experience across 1,300+ projects, the most reliable predictor of offshore code quality problems is not developer skill level or timezone gap. It is whether there is an independent peer review process for every PR, with specific criteria and a defined turnaround expectation. Engagements without structured peer review consistently produce lower quality output than engagements with it, regardless of developer seniority.

What code review process works best for offshore teams?

A written checklist attached to every PR, reviewed by a developer who was not involved in writing the code, with a maximum 24-hour turnaround expectation during the timezone overlap window. The checklist should cover standards adherence, test coverage, security considerations, and performance implications. Review comments should reference the standards document, not just identify a problem. One approved review from a named senior developer before merge is the minimum viable process.

How do you handle code quality in a team with significant timezone differences?

Through async-first quality processes. Code review does not require real-time collaboration. A developer in India submits a PR at the end of their day. A reviewer in the UK reviews it at the start of their day. With a 24-hour turnaround expectation, this works efficiently. The CI pipeline runs automatically regardless of timezone. Production monitoring alerts are received regardless of timezone. The sprint retrospective is the one synchronous quality review event per sprint. Timezone gaps require async quality process design, not in-person equivalence.

What automated tools should be used for Laravel code quality?

PHPStan or Larastan for static analysis (start at Level 3 and increase over time), Laravel Pint or PHP CS Fixer for code style enforcement, PHPUnit with a minimum coverage threshold for testing, Dependabot or equivalent for dependency vulnerability alerts, and Sentry for production error tracking. These tools should be configured in GitHub Actions or GitLab CI and set to block merges on failure. The combination of static analysis, style enforcement, and test coverage requirements catches the majority of quality issues before a human reviewer sees the code.

