The Complete Guide to InsurTech Software Development in 2026

InsurTech software is not a fintech variant with policy fields. It is a policy-centric, regulator-accountable platform that runs quote, bind, issue, endorse, pay, and report as one system.

Acquaint Softtech

This 2026 playbook explains the architecture, the cost, the compliance map, and the exact decision that separates a build from a buy.

- Insurance product and engineering leaders planning core systems, claims, or underwriting platforms.

- InsurTech founders comparing custom builds with Guidewire, Duck Creek, Majesco, or Sapiens.

- MGA operators needing fast product rollout and scalable insurance software.

- Investors and board members evaluating insurance tech plans, feasibility, and cost.

Most insurance platforms fail quietly before the first policy is written. At Acquaint Softtech, a software partner that has shipped 1,300+ projects across 13 years for carriers, MGAs, and InsurTech founders in the US, UK, Australia, and the UAE, we have seen the same root cause every time: the team treated the insurance contract like a fintech account and the regulator like a content reviewer. The cost surfaces late, usually as a loss ratio miss, a regulator query, or a product launch that slips a full quarter, and by then the rebuild costs more than the original build. This playbook sets out the architecture, the engagement model, and the decision framework we use on every insurance platform build and software product engineering engagement, so that this category error never becomes yours.

An insurance platform is a legal instrument that happens to run on software. Every object in it (the policy, the endorsement, the reserve, the cession, the bordereau) exists because a regulator requires it to exist, in a format the regulator requires, with a retention period the regulator sets. The NAIC model laws that US states adapt into local statute are the clearest illustration of this: they shape rate filings, annual statements, and market conduct exams down to the field level. That constraint is the design input, not a compliance checkbox at the end. Teams that internalise this ship platforms that scale. Teams that do not internalise it ship prototypes that stall at the first audit.

“The teams who ship working InsurTech platforms treat compliance as a data model question, not a lawyer question.” - Senior Solution Architect, Acquaint Softtech

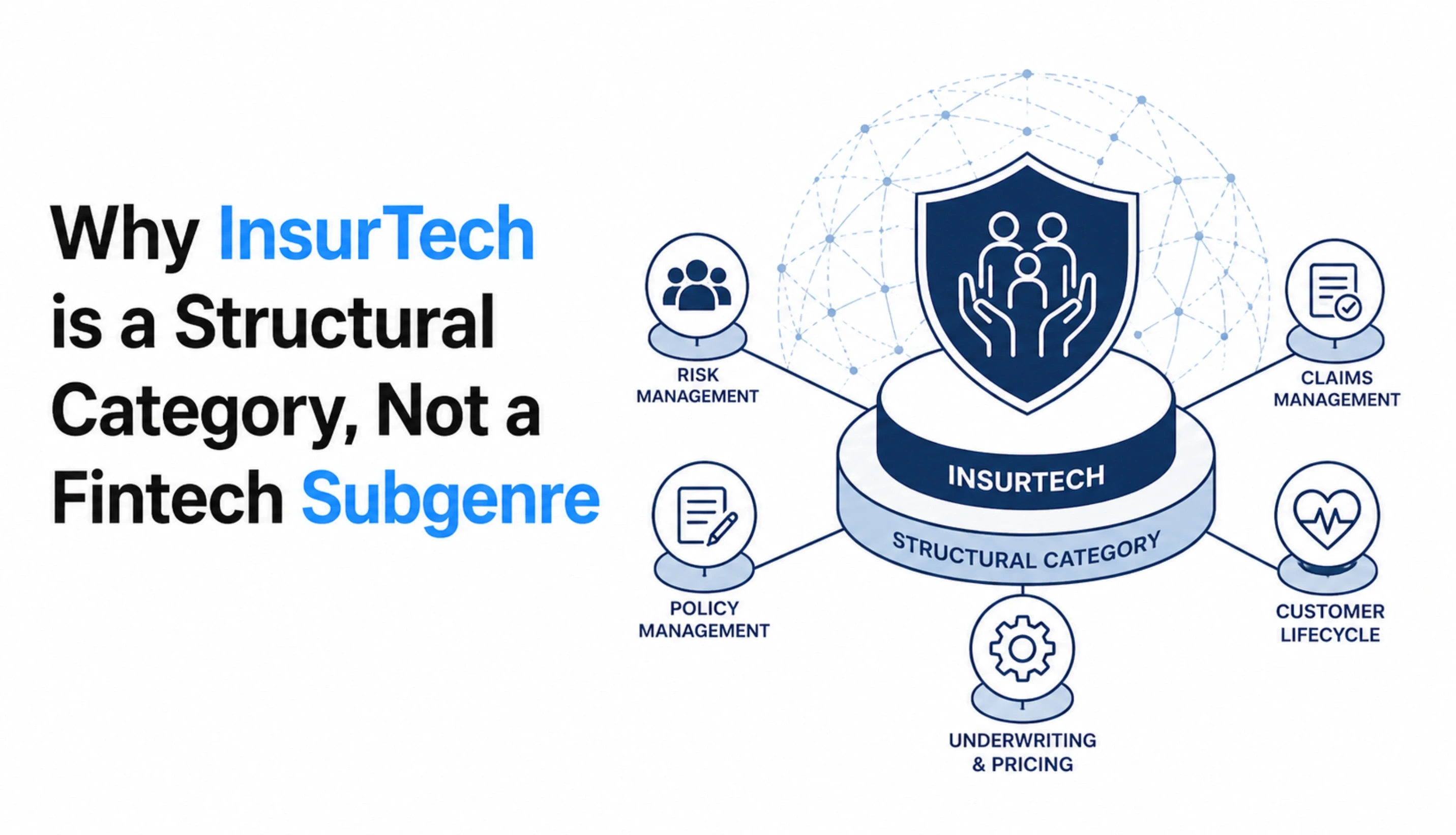

Why InsurTech Is a Structural Category, Not a Fintech Subgenre

InsurTech and fintech share a delivery aesthetic (APIs, mobile, SaaS), but the two stacks solve different problems. A fintech stack moves money from one account to another. An insurance stack makes a contingent promise, reserves capital against it, and pays a variable amount on an uncertain future event, under prudential rules that change state by state and year by year.

Most founders who come to us from a fintech background spend the first two weeks unlearning patterns that worked in a payments context but map badly onto an insurance one. The learning curve is short but unavoidable, and skipping it produces platforms that read well in a deck and fall apart in front of an examiner.

The Policy Is the Primary Entity, Always

Every table, every event, every report in an insurance platform hangs off the policy. The insured is a relation on the policy. The risk is a relation on the policy. The premium is a relation on the policy. So are the endorsement, the claim, the reinsurance cession, and the document archive.

A fintech data model starts with an account and treats transactions as children. An insurance data model starts with a policy and treats everything, including the customer, as a relation. Teams that borrow the fintech model and retrofit policy fields discover the mistake at the first cancellation or rewrite, when the data no longer reconciles.
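A minimal sketch of what "policy as the primary entity" can look like in code. The class and field names here are illustrative assumptions, not a schema from any particular platform; the point is only that the insured, the endorsements, and the claims are all reached through the policy, and that premium reconciles from the policy rather than from a customer account.

```python
from dataclasses import dataclass, field
from datetime import date
from decimal import Decimal


@dataclass
class Insured:
    name: str
    role: str  # e.g. "named insured" or "additional insured"


@dataclass
class Endorsement:
    effective: date
    description: str
    premium_change: Decimal


@dataclass
class Claim:
    loss_date: date
    description: str


@dataclass
class Policy:
    """Root entity: every other record is a relation on the policy."""
    policy_number: str
    effective: date
    expiry: date
    base_premium: Decimal
    insureds: list[Insured] = field(default_factory=list)
    endorsements: list[Endorsement] = field(default_factory=list)
    claims: list[Claim] = field(default_factory=list)

    def written_premium(self) -> Decimal:
        # Premium is derived from the policy and its endorsements only.
        changes = sum((e.premium_change for e in self.endorsements), Decimal("0"))
        return self.base_premium + changes


# Hypothetical example data.
policy = Policy("POL-0001", date(2026, 1, 1), date(2027, 1, 1), Decimal("1200.00"))
policy.insureds.append(Insured("Example Bakery Ltd", "named insured"))
policy.endorsements.append(Endorsement(date(2026, 4, 1), "Add second location", Decimal("150.00")))
policy.endorsements.append(Endorsement(date(2026, 6, 1), "Remove delivery van", Decimal("-80.00")))
```

Because the insured is a list on the policy rather than the owner of it, a cancellation or rewrite touches one root record and its relations, which is what keeps the data reconcilable.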

Time Is a First-Class Attribute, Not a Timestamp

An insurance transaction has at least three dates that matter: the transaction effective date, the transaction booking date, and the rate version date. A mid-term endorsement applied today for a loss that happened last month reads the policy as it existed on the loss date, rates against the table in force then, and books a financial entry dated today.

A fintech account needs a timestamp. An insurance policy needs a time engine. If the team cannot draw, on a whiteboard, the exact sequence of dates used when calculating a retroactive endorsement, the time model is not yet ready for production. Every failure we have investigated around premium leakage or under-reserving traces back to this same gap.
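The date sequence described above can be sketched as a small as-of lookup. This is a simplified illustration under stated assumptions: the version lists, the `as_of` helper, and the `Transaction` fields are hypothetical names, and the sketch assumes the rate version is selected by the transaction effective date.

```python
from bisect import bisect_right
from dataclasses import dataclass
from datetime import date


def as_of(versions, when):
    """Return the value of the latest version effective on or before `when`.

    `versions` is a list of (effective_date, value) tuples sorted by date.
    """
    dates = [effective for effective, _ in versions]
    index = bisect_right(dates, when)
    if index == 0:
        raise LookupError(f"no version in force on {when}")
    return versions[index - 1][1]


# Hypothetical data: coverage limit per policy version, factor per filed rate version.
policy_versions = [(date(2026, 1, 1), 100_000), (date(2026, 5, 1), 250_000)]
rate_versions = [(date(2025, 7, 1), 1.00), (date(2026, 3, 1), 1.08)]


@dataclass(frozen=True)
class Transaction:
    effective_date: date      # when the change applies to the contract
    booking_date: date        # when the financial entry is posted
    rate_version_date: date   # date used to pick the filed rate table
    limit: int
    rate_factor: float


def retroactive_endorsement(effective, booked_on):
    # Read the policy and the rate table as they stood on the effective date,
    # but book the financial entry on the date the work is actually done.
    return Transaction(
        effective_date=effective,
        booking_date=booked_on,
        rate_version_date=effective,
        limit=as_of(policy_versions, effective),
        rate_factor=as_of(rate_versions, effective),
    )


txn = retroactive_endorsement(effective=date(2026, 4, 10), booked_on=date(2026, 5, 12))
```

Here the transaction is booked on 12 May but reads the limit and rate factor in force on 10 April, even though a newer policy version exists by the booking date.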

The Audit Log Is the Product, Not a Feature

When an examiner asks why a claim was reserved at a certain amount on day 14 and revised on day 62, the answer is not a log snippet. It is an immutable, queryable, timestamped record that names the actor, the prior state, the new state, and the rule or reason. That record is also what you send to a reinsurer during a treaty audit, what you produce for a state insurance department during a market conduct examination, and what underpins every Solvency II pillar 3 disclosure.

The NAIC Market Conduct Record Retention Model Act is explicit that an insurer shall maintain its books, records, and documents in a manner so that the commissioner can readily ascertain during an examination the insurer's compliance with state insurance laws, and the UK government's Solvency II explanatory guidance draws the same hard line on transparency, supervisory reporting, and public disclosure. None of that works without a clean, traceable underlying record. Bolting it on in sprint 28 does not work. It is built in sprint 1, or it is never built correctly.
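As a rough illustration of the record described above, the sketch below stores one entry per change with the actor, the prior state, the new state, and the reason. The `AuditEntry` and `AuditLog` names are assumptions for this example, and the in-memory list stands in for a store that would enforce append-only behaviour at the database layer.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditEntry:
    entity_id: str
    actor: str
    recorded_at: datetime
    prior_state: Optional[dict]
    new_state: dict
    reason: str


class AuditLog:
    """Append-only by convention: the class exposes no update or delete method."""

    def __init__(self):
        self._entries = []

    def append(self, entry: AuditEntry) -> None:
        self._entries.append(entry)

    def history(self, entity_id: str) -> list:
        # Entries for one entity, in the order they were written.
        return [e for e in self._entries if e.entity_id == entity_id]


# Hypothetical claim: reserved on day 14, revised on day 62.
log = AuditLog()
opened = datetime(2026, 3, 1, tzinfo=timezone.utc)
log.append(AuditEntry("CLM-1", "adjuster.a", opened + timedelta(days=14),
                      None, {"reserve": 5000}, "initial reserve per triage"))
log.append(AuditEntry("CLM-1", "adjuster.b", opened + timedelta(days=62),
                      {"reserve": 5000}, {"reserve": 12000}, "repair estimate received"))

# Who changed the reserve, from what, to what, and why.
answers = [(e.actor, e.prior_state, e.new_state, e.reason) for e in log.history("CLM-1")]
```

The examiner's question about day 14 and day 62 becomes a lookup over `history("CLM-1")` rather than a reconstruction exercise.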

A policy-centric data model retrofitted onto an account-centric system costs roughly 3 to 5 times what a clean build would have cost in year one. The mistake is usually made in week 2.

Want a Read on Whether Your Data Model Is Policy-Centric?

Send your current ERD or a sketch, and our solutions team will return a 2-page review within 48 hours, naming the three highest-risk assumptions and what they will cost to fix after go-live vs before. No commitment, no sales motion. We review 6 to 8 of these a month, so early requests go out first.

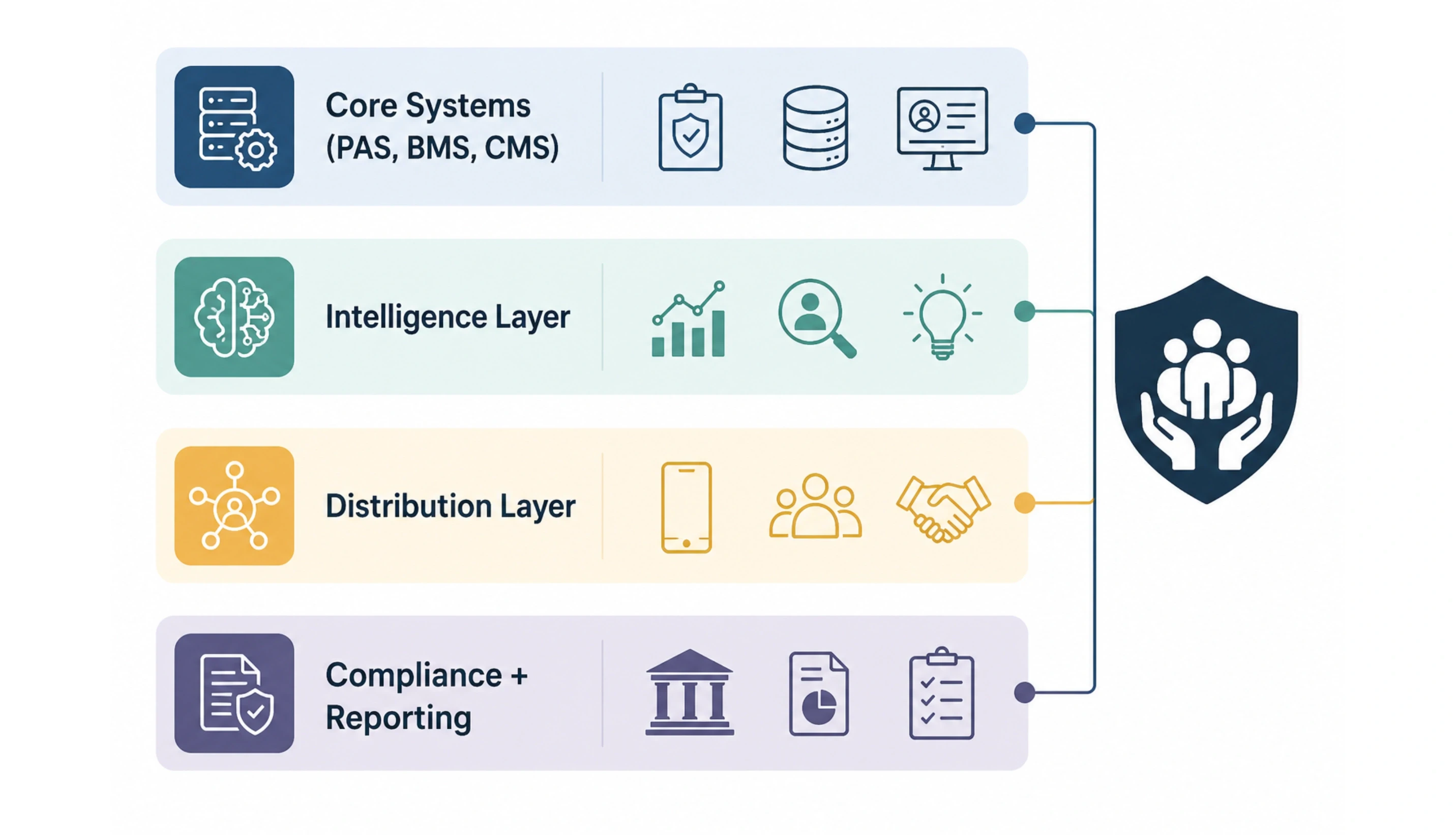

The Anatomy of a Modern Insurance Platform

A working platform in 2026 is organised in four layers, each with a clear owner, a clear interface, and a clear failure boundary. Confusing the layers is the most common reason InsurTech builds slip past 18 months and 2x budget. The map below is what we start with on every greenfield scoping call, and it is also the shape we help carriers migrate toward when they are moving off a mainframe. Founders who prefer to work this through with an engineer on the first call typically start with our discovery process, described in the discovery workshop services for regulated software platforms.

| Layer | What It Owns | Typical Stack Patterns | Accountable Role |
| --- | --- | --- | --- |
| Core Systems (PAS, BMS, CMS) | Quote, bind, issue, endorse, renew, bill, reconcile, FNOL, adjudicate, pay, subrogate | Laravel or Django services, PostgreSQL, event bus, per-service databases for blast radius | Head of Product + Chief Actuary |
| Intelligence Layer | Document extraction, risk scoring, claims triage, fraud flagging, appetite matching, reserve estimation | Python ML services, vector stores, LLM integration with retrieval, human-in-the-loop review UI | Head of Underwriting + Head of Claims |
| Distribution Layer | Policyholder portal, agent portal, embedded insurance API, claims mobile app, notifications | React or Next.js web, React Native or Flutter mobile, OAuth, partner-facing REST, and webhook APIs | Head of Distribution + CTO |
| Compliance + Reporting | Audit trail, statutory reports, bordereaux, reinsurance settlements, data subject requests | Immutable event log, WORM document store, per-jurisdiction report generators from the event log | Chief Compliance Officer + Data Protection Officer |

The four layers communicate by event, not by direct call. The distribution layer raises a quote-requested event; the core layer handles it and publishes a quote-created event; the intelligence layer subscribes, scores the risk, and publishes a risk-assessment-completed event; the compliance layer records every step. This shape, sometimes called event-driven hexagonal architecture, is what makes a platform both testable and auditable. The main reason for it is replay.
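The event flow above can be sketched with a tiny in-process bus. This is an illustration only: the `EventBus` class, the handler names, and the payload fields are assumptions, and handlers here run synchronously in one process, whereas a production deployment would normally put a message broker between the layers so that one layer's outage cannot block another.

```python
from collections import defaultdict


class EventBus:
    def __init__(self):
        self._handlers = defaultdict(list)
        self.log = []  # every published event, in order; stands in for the compliance record

    def subscribe(self, event_type, handler):
        self._handlers[event_type].append(handler)

    def publish(self, event_type, payload):
        self.log.append((event_type, payload))
        for handler in list(self._handlers[event_type]):
            handler(payload)


bus = EventBus()


def handle_quote_requested(payload):
    # Core layer: turns a quote request into a quote (premium is a placeholder value).
    quote = {"quote_id": "Q-1", "risk": payload["risk"], "premium": 1200}
    bus.publish("quote-created", quote)


def handle_quote_created(quote):
    # Intelligence layer: scores the risk once the quote exists (score is a placeholder).
    bus.publish("risk-assessment-completed", {"quote_id": quote["quote_id"], "score": 82})


bus.subscribe("quote-requested", handle_quote_requested)
bus.subscribe("quote-created", handle_quote_created)

# Distribution layer raises the first event.
bus.publish("quote-requested", {"risk": "small bakery"})

event_types = [event_type for event_type, _ in bus.log]
```

Because every step lands in `bus.log` in order, the same sequence can be fed back through the handlers later, which is the replay property the architecture is chosen for.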

Where the AI Actually Sits

Founders often ask where the AI goes in the architecture. The answer is the intelligence layer, never inside the core. Underwriting AI, claims triage, fraud detection, and document extraction are all inference services that subscribe to core events, produce a scored recommendation, and publish that recommendation back.

The core never asks the AI a question synchronously, because an AI outage must never block a bind, a payment, or a claim. This separation also means the AI can be swapped, retrained, or audited independently of the policy lifecycle, which matters when regulators ask to see the training data, the decision logs, and the override rate for any AI involved in a material decision.

The Four Layers, by the Numbers

<100ms Core quote response target | 60%+ Underwriter time saved by AI | 200ms Embedded API SLA at partner sites | 7+ yrs Typical audit-log retention |

Claims Automation: From FNOL to Payout in Hours, Not Days

Claims are where an insurance platform either earns or destroys its reputation. Every other workflow (quote, bind, renew) is a promise. A claim is the moment that promise is tested. A modern claims engine takes an FNOL at 2 am, verifies coverage against the policy as of the loss date, posts an initial reserve, runs fraud and subrogation screens, assigns the right adjuster, and acknowledges the insured, all before a human at the carrier has seen the notice. Done well, claims cycle time drops from 8 to 12 days to 6 to 30 hours for a clean first-party auto or property claim, while loss ratio and customer satisfaction both improve.

The Five Stages of an Automated Claim

A claim is not a ticket. It is a structured workflow with five stages, each with a defined handoff, a defined data write, and a defined audit entry. Skip any one of them, and the platform fails an examination later. The table below is the shape we implement on every claims module, with typical automation coverage shown for a mid-complexity P&C book.

| Stage | What Happens | Automation Coverage | Typical Cycle Time |
| --- | --- | --- | --- |
| 1. Intake (FNOL) | Web form, mobile app, voice intake, or broker portal captures loss details; claim record created with auto-generated number | 95% automated | Under 3 minutes |
| 2. Coverage Verification | System reads the policy as of the loss date, validates coverage, limits, deductibles, and exclusions | 100% automated | Under 10 seconds |
| 3. Reserve + Triage | AI triage scores severity, sets initial reserve, flags fraud signals, routes to adjuster queue by skill | 70% automated | Under 5 minutes |
| 4. Adjudication | Adjuster reviews evidence, orders inspections or medical records, approves or denies with documented reasoning | 30% automated, 70% human | Hours to days |
| 5. Payout + Subrogation | Payment issued via ACH, card, or cheque; subrogation opened against third party if applicable; audit log sealed | 90% automated | Under 60 minutes |

6 hrs Target cycle time | For a clean first-party auto or property claim with complete documentation and no fraud flags, a well-built claims engine closes the full FNOL-to-payout loop in 6 to 30 hours. The industry average on legacy systems sits between 8 and 14 days. |
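One way to make "a claim is not a ticket" concrete is to model the five stages as an ordered workflow in which no stage can be skipped and every handoff writes an audit entry. The sketch below is a simplified assumption of that shape; the `Stage` and `ClaimWorkflow` names are hypothetical.

```python
from enum import Enum


class Stage(Enum):
    INTAKE = 1
    COVERAGE_VERIFICATION = 2
    RESERVE_AND_TRIAGE = 3
    ADJUDICATION = 4
    PAYOUT_AND_SUBROGATION = 5


class ClaimWorkflow:
    def __init__(self, claim_number):
        self.claim_number = claim_number
        self.stage = Stage.INTAKE
        # Each audit entry: (stage entered, actor, note).
        self.audit = [(Stage.INTAKE, "system", "FNOL received")]

    def advance(self, actor, note):
        """Move to the next stage in order and record who did it and why."""
        if self.stage is Stage.PAYOUT_AND_SUBROGATION:
            raise ValueError("claim is already in the final stage")
        self.stage = Stage(self.stage.value + 1)
        self.audit.append((self.stage, actor, note))
        return self.stage


# Hypothetical clean claim moving through all five stages.
claim = ClaimWorkflow("CLM-1")
claim.advance("system", "coverage confirmed as of loss date")
claim.advance("triage-model", "initial reserve posted")
claim.advance("adjuster.a", "evidence reviewed, approved")
claim.advance("system", "payment issued")
```

Since `advance` only ever moves one step forward, a claim cannot reach payout without an audit entry for each earlier stage.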

What Separates a Real Claims Engine From a Workflow Tool

Many platforms badged as claims systems are ticketing tools with insurance labels. A real claims engine handles three things that a ticket tracker cannot: reserve accounting, reinsurance cession, and subrogation ledgers. Reserves move money on the balance sheet, not just a status field on a record.

Reinsurance recoveries need to flow through the cession table the moment a claim crosses a treaty attachment point. Subrogation runs as a parallel ledger that can outlive the underlying claim by years. If these three are missing, the platform will fail an actuary review and cause loss ratio drift within six months of go-live, regardless of how slick the intake UI looks.
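To illustrate what "the moment a claim crosses a treaty attachment point" means numerically, here is a sketch for a single excess-of-loss layer. The treaty type, the attachment point, and the limit are assumptions chosen for the example; real treaties carry further terms that this ignores.

```python
from decimal import Decimal


def ceded_amount(incurred, attachment, limit):
    """Amount ceded under one excess-of-loss layer (`limit` in excess of `attachment`)."""
    excess = max(incurred - attachment, Decimal("0"))
    return min(excess, limit)


def cession_movement(previous_incurred, new_incurred, attachment, limit):
    """Change in the ceded amount caused by one reserve or payment movement."""
    before = ceded_amount(previous_incurred, attachment, limit)
    after = ceded_amount(new_incurred, attachment, limit)
    return after - before


# Hypothetical treaty: 750,000 in excess of 250,000.
ATTACHMENT = Decimal("250000")
LIMIT = Decimal("750000")

# Reserve rises from 200,000 to 400,000: the claim crosses the attachment point.
first = cession_movement(Decimal("200000"), Decimal("400000"), ATTACHMENT, LIMIT)

# Reserve rises again to 1,200,000: the layer is exhausted at its limit.
second = cession_movement(Decimal("400000"), Decimal("1200000"), ATTACHMENT, LIMIT)
```

Computing the movement on every reserve change, rather than at month-end, is what lets the cession table stay in step with the claim.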

The Fraud and Subrogation Moves Most Platforms Skip

Two categories of automation pay for themselves within the first 12 months. Fraud detection runs on every FNOL: network analysis against past claims, duplicate-invoice checks, staged-loss indicators, and linkage to watchlists such as ISO ClaimSearch. A mid-sized auto carrier typically identifies 3% to 6% of claims as worth investigation, recovering 1.5% to 3% of gross incurred.

Subrogation automation flags every third-party-at-fault loss, opens a sub-claim ledger, and tracks recovery against the adjuster's target. Carriers that run both see a 40 to 80 basis-point loss ratio improvement in year one, which is usually enough to fund the entire claims engine build on its own. These automations are a core part of every AI development services engagement we run for claims and fraud workflows, and the mobile intake layer is typically built as part of a mobile app development workstream that runs in parallel with the core claims engine.

What 'Good' Looks Like on a Claims Engine

- Coverage verification is deterministic, reproducible, and logged with policy version and effective date

- Initial reserve is posted within 5 minutes of FNOL for 95% of claims

- Fraud screening runs on 100% of notices, not just flagged ones, with the score stored on the claim

- Reinsurance cession is calculated the moment a treaty attachment is crossed, not at month-end

- The subrogation ledger is opened automatically for every third-party-at-fault loss

- Every status change, reserve movement, and payment is written to an immutable audit log

- Adjuster workload is rebalanced nightly based on complexity scores, not alphabetical assignment

The 12-Point InsurTech Build Readiness Checklist

A printable one-page audit that scores your current insurance platform on data model, audit trail, rating engine, claims workflow, reinsurance accounting, and compliance readiness. Used by carriers and MGAs in the US, UK, Australia, and the UAE before scoping a build.

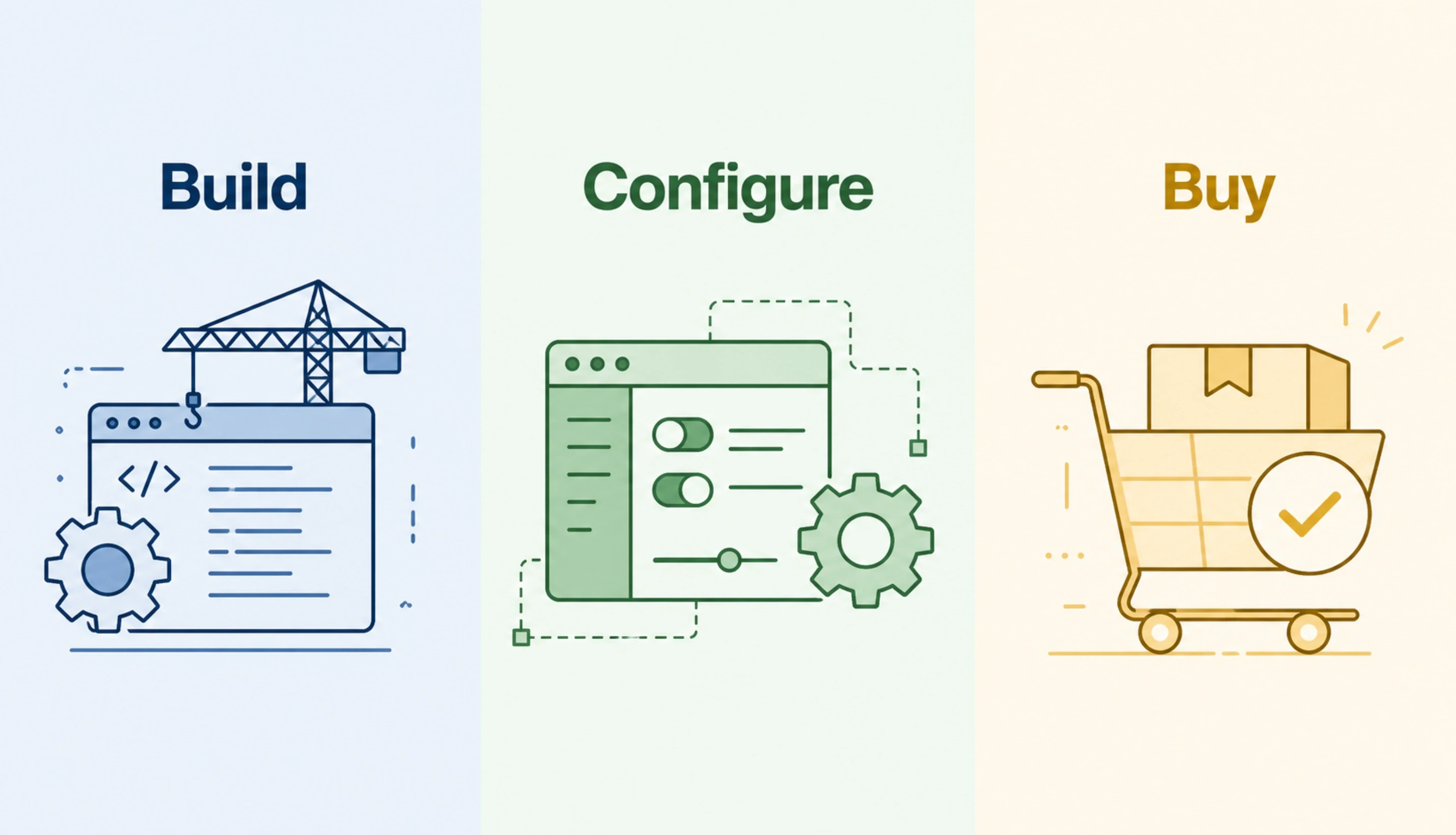

Build vs Configure vs Buy

There are three legitimate paths into a modern insurance platform. Build means a custom system engineered for your specific product line, distribution model, and regulatory footprint. Configure means licensing a commercial core (Guidewire, Duck Creek, Majesco, Sapiens, Socotra) and tailoring it to your needs.

Buy means adopting a vertical SaaS that already runs a specific class of business, for example, a direct-to-consumer pet insurance stack. The wrong path costs two years, not two quarters, and the cost seldom shows up in year one.

| Decision Dimension | Build Custom | Configure Commercial Core | Buy Vertical SaaS |
| --- | --- | --- | --- |
| Best fit | Specialty MGA, embedded, parametric, on-demand, niche commercial, rapid reconfiguration | Standard personal or commercial lines at scale, multi-state US carriers | Single-line direct-to-consumer play with no appetite for engineering |
| Year-one spend | $220,000 to $1.2M depending on scope | $1M to $5M licence plus implementation partner | Per-policy fee, no upfront build cost |
| Time to first policy | 9 to 14 months for a focused MVP | 12 to 24 months for a non-standard product | 4 to 8 weeks |
| Product flexibility after launch | High: new product line in 6 to 10 weeks | Moderate: constrained by the vendor's data model | Low: whatever the vendor ships is what you get |
| Who owns the IP and data | Client owns everything from day one | Client owns data, vendor owns platform IP | Vendor owns both IP and platform |

If three or more rows point to build, a custom platform is the right structure. If three or more rows point to configure, a commercial core is the right structure. If the business model is a single line of direct-to-consumer insurance and you have no engineering appetite, vertical SaaS is the right structure. Most founders who ask us do not fit cleanly into one column on day one, which is why we run a paid two-week discovery before any build engagement to pin the answer down.
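The rule of thumb above can be written down as a small tally. This is a sketch under assumptions: the function name, the vote labels, and the order in which the rules are checked are ours, since the text does not say which rule wins when more than one applies.

```python
from collections import Counter


def recommend(row_votes, single_line_dtc=False, engineering_appetite=True):
    """Suggest a path from per-row votes of "build", "configure", or "buy".

    Assumption: the single-line, no-engineering case is checked first.
    """
    if single_line_dtc and not engineering_appetite:
        return "buy"
    counts = Counter(row_votes.values())
    if counts["build"] >= 3:
        return "build"
    if counts["configure"] >= 3:
        return "configure"
    return "discovery"  # no clear column: resolve it in the discovery phase


# Hypothetical specialty MGA scoring itself against the five rows of the table.
mga_votes = {
    "best_fit": "build",
    "year_one_spend": "build",
    "time_to_first_policy": "configure",
    "flexibility": "build",
    "ip_ownership": "build",
}

# Hypothetical founder whose rows are split across the columns.
mixed_votes = {
    "best_fit": "build",
    "year_one_spend": "buy",
    "time_to_first_policy": "buy",
    "flexibility": "configure",
    "ip_ownership": "configure",
}
```

`recommend(mga_votes)` returns `"build"`, while `recommend(mixed_votes)` returns `"discovery"`, which mirrors the observation that many founders do not fit one column on day one.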

Underwriting AI: The Intelligence Layer That Actually Ships

Underwriting is the second-hardest job in insurance and the one that most benefits from well-built AI. A submission arrives as a 40-page packet: ACORD forms, loss runs, property schedules, motor vehicle records, financial statements, and inspection photos. A human underwriter reads all of it, extracts risk attributes, checks them against the carrier's appetite, rates the risk, and produces a quote or a decline.

The process takes 90 minutes to 4 hours on a commercial submission. A well-built underwriting AI reduces that to 8 to 15 minutes of human review on top of 45 seconds of machine work, without giving up the audit trail or the regulator-facing reasoning.

What Underwriting AI Actually Does

The intelligence layer performs five tasks end-to-end on a submission, each auditable and each swappable. Document extraction parses ACORD forms and loss runs into structured attributes. Risk scoring runs the structured attributes against the carrier's appetite model and returns a score with the exact contributing factors.

Appetite matching flags submissions that fall outside the carrier's current writing rules. Reserve estimation predicts ultimate loss on any flagged exposure. Fraud screening checks linked entities against watchlists and prior-claim networks. Every output carries a confidence score and a reason string, and nothing binds without human approval when confidence is below, or the stakes are above, the carrier's defined thresholds.

The Five Tasks of a Production Underwriting AI

01 Document Extraction ACORD forms, loss runs, and schedules | 02 Risk Scoring Attributes vs. the appetite model | 03 Appetite Match Writing rules vs submission | 04 Reserve Estimate Ultimate loss on flagged risks | 05 Fraud Screen Watchlists + network checks |

The Referral-First Pattern (And Why Regulators Accept It)

Every material decision in insurance (bind, decline, pay, or deny) must be traceable to a licensed human under every major regulatory regime. The practical shape that satisfies both the commercial speed goal and the regulatory rule is the referral-first pattern. The AI proposes a decision with a confidence score and a reason string. If confidence is above the threshold for that risk class, the AI's recommendation goes to the agent portal as an indicative quote; a human underwriter reviews only the outliers.

If confidence is below the threshold, or the premium or limit exceeds the auto-bind cap, the submission routes to a human for full review. Overrides are logged, classified, and fed back into the next model version. Carriers that deploy AI this way see measurable cycle-time and loss-ratio improvement. Those that deploy AI as a replacement for the human run into findings on the first market conduct examination.
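The routing rule in the referral-first pattern can be sketched in a few lines. The names, the threshold values, and the treatment of a confidence exactly equal to the threshold (treated here as passing) are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    decision: str
    confidence: float
    reason: str
    premium: float
    limit: float


def route(rec, confidence_threshold, auto_bind_premium_cap, auto_bind_limit_cap):
    """Return where the AI's recommendation goes next."""
    if rec.confidence < confidence_threshold:
        return "human_review"
    if rec.premium > auto_bind_premium_cap or rec.limit > auto_bind_limit_cap:
        return "human_review"
    return "indicative_quote"


# Hypothetical thresholds for one risk class.
THRESHOLDS = dict(confidence_threshold=0.85, auto_bind_premium_cap=25_000, auto_bind_limit_cap=1_000_000)

confident_small = Recommendation("quote", 0.93, "within appetite, clean loss runs", 8_000, 500_000)
confident_large = Recommendation("quote", 0.95, "within appetite, clean loss runs", 60_000, 500_000)
uncertain = Recommendation("quote", 0.61, "loss runs incomplete", 8_000, 500_000)
```

With these inputs, only `confident_small` goes to the agent portal as an indicative quote; the other two route to a human, one because of the premium cap and one because of low confidence.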

Model Operations and Audit

A production underwriting AI is not a model; it is a model lifecycle. Every model version is tagged, every training dataset is archived, and every inference call logs the input, the output, and the model version. When a regulator asks how a specific submission was scored in Q2 of last year, the platform replays the exact model version against the exact input and produces the exact output.
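A minimal sketch of the log-and-replay idea follows. The registry, the version tags, and the toy scoring functions are hypothetical stand-ins for archived model artefacts; the point is only that each inference call stores the input, the output, and the model version, so that the same call can be re-run later and compared.

```python
import json

# Hypothetical registry: each tagged version maps to a deterministic scoring function.
MODEL_REGISTRY = {
    "uw-risk-1.0": lambda features: 50 + 10 * features["prior_claims"],
    "uw-risk-1.1": lambda features: 40 + 15 * features["prior_claims"],
}

inference_log = []


def score(submission_id, features, model_version):
    """Score a submission and log input, output, and model version."""
    output = MODEL_REGISTRY[model_version](features)
    inference_log.append({
        "submission_id": submission_id,
        "model_version": model_version,
        "input": json.dumps(features, sort_keys=True),
        "output": output,
    })
    return output


def replay(submission_id):
    """Re-run the logged input against the logged model version and compare."""
    record = next(r for r in inference_log if r["submission_id"] == submission_id)
    rerun = MODEL_REGISTRY[record["model_version"]](json.loads(record["input"]))
    return rerun == record["output"], rerun


original = score("SUB-1", {"prior_claims": 2}, "uw-risk-1.0")
matches, rerun_output = replay("SUB-1")
```

Replay uses the version recorded at the time, not the latest one, so a later release such as `uw-risk-1.1` does not change the answer given for `SUB-1`.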

This is not aspirational. It is the only way to answer a disparate-impact query from a US state department of insurance, a fairness inquiry under the UK Consumer Duty, or an algorithmic-accountability question under EU AI Act rules. Building this into the pipeline from sprint 1 costs a few percent of the AI budget. Retrofitting it after a regulator query costs 10x that number and delays the answer by quarters. Teams that want to go deeper on the intelligence-layer architecture typically start with a focused AI development engagement for underwriting and claims workflows, and the Python services that power the model layer are built and maintained by the same team through our Python development company practice.

The Measurable Outcomes Carriers Track

A well-built underwriting AI typically produces four measurable year-one outcomes on a commercial or specialty book: submission cycle time falls by 50% to 70%, quote-to-bind ratio improves by 2 to 5 percentage points because submissions are routed faster, loss ratio improves by 30 to 90 basis points as the appetite model catches adverse-selection drift earlier, and underwriter capacity expands by 40% to 60% without additional hiring.

For carriers running 20,000 or more submissions a year, those four numbers together justify the build many times over before the end of year two.

60% Underwriter capacity gain | On a commercial or specialty book running 20,000+ submissions a year, a production underwriting AI typically expands effective underwriter capacity by 40% to 60% without additional hiring, while improving loss ratio by 30 to 90 basis points. |

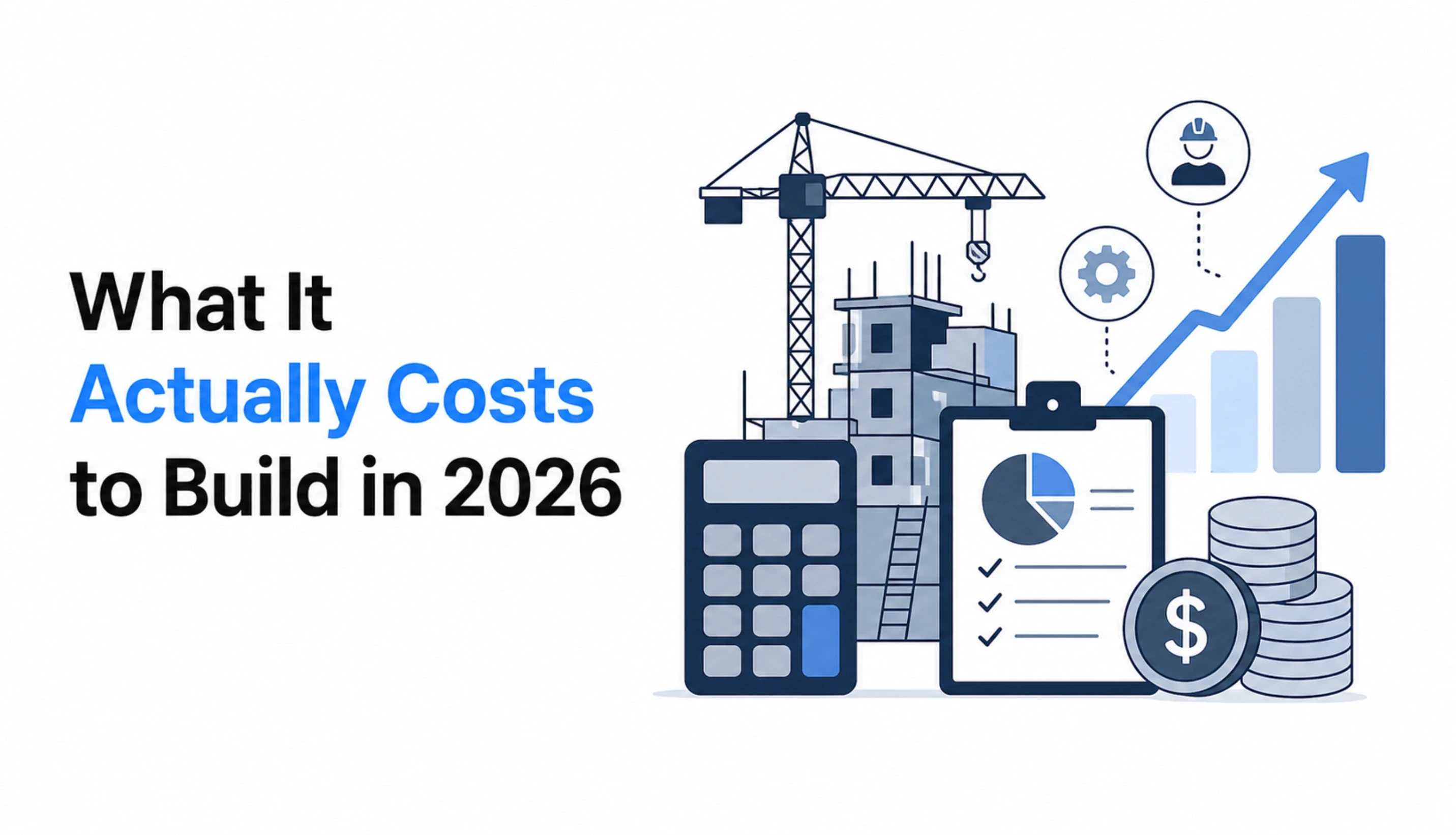

What It Actually Costs to Build in 2026

Cost depends on three variables: scope of core systems, number of product lines and geographies, and engagement model. The table below shows realistic ranges for a dedicated team engagement producing a production-grade platform. All numbers are USD, drawn from delivery operations across 1,300+ projects, and the same structure applies whether the work runs through an IT staff augmentation arrangement or a fully managed team.

| Engagement Size | Monthly Team Cost | In-House Equivalent | Annual Saving | Client Oversight |
| --- | --- | --- | --- | --- |
| Small MVP (1 product line, 1 geography): 4 engineers + PM + QA | $13,000 to $20,000 | $35,000 to $48,000 | $260,000 to $340,000 | 1 to 2 hrs/wk |
| Medium (full core, 2 to 3 lines): 7 engineers + PM + QA + DevOps | $22,000 to $34,000 | $58,000 to $78,000 | $430,000 to $540,000 | 3 to 4 hrs/wk |
| Large (multi-geo, multi-line, AI layer): 12+ engineers + PM + QA + DevOps + Architect | $40,000 to $62,000 | $95,000 to $135,000 | $660,000 to $870,000 | 5 to 6 hrs/wk |

Read across any row, and the partnered team costs roughly 40% of the equivalent in-house team (about 37% to 46% on the figures above), which is a saving of roughly 54% to 63%. The gap is driven by lower employer overhead, no recruiter fees, and no bench cost between projects. In-house numbers assume US market salaries loaded with benefits, PTO, retirement match, equipment, office, and a 20% recruiter fee amortised across 24 months of tenure. The contract should always state that the rate paid is the full rate, with no employer loading added later. That is a contract term, not a negotiation point.
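The arithmetic behind the table can be checked directly. The sketch below uses the monthly figures from the table and assumes that the low end of each partner range is compared with the low end of the in-house range, and high with high; on that assumption the annual savings match the table's rounded figures, and the partner cost works out to roughly 37% to 46% of the in-house cost.

```python
# Monthly USD figures from the table: (partner low, partner high, in-house low, in-house high).
engagements = {
    "small": (13_000, 20_000, 35_000, 48_000),
    "medium": (22_000, 34_000, 58_000, 78_000),
    "large": (40_000, 62_000, 95_000, 135_000),
}


def annual_saving(partner_monthly, in_house_monthly):
    return (in_house_monthly - partner_monthly) * 12


def cost_ratio(partner_monthly, in_house_monthly):
    """Partner cost as a fraction of in-house cost."""
    return partner_monthly / in_house_monthly


summary = {}
for name, (p_lo, p_hi, h_lo, h_hi) in engagements.items():
    summary[name] = {
        # Low compared with low, high with high (an assumption about how the ranges pair up).
        "annual_saving": (annual_saving(p_lo, h_lo), annual_saving(p_hi, h_hi)),
        "cost_ratio": (round(cost_ratio(p_lo, h_lo), 2), round(cost_ratio(p_hi, h_hi), 2)),
    }
```

For the small engagement this gives an annual saving of 264,000 to 336,000 USD, in line with the table's 260,000 to 340,000.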

What the Monthly Rate Includes

- Full-time, dedicated engineers with average tenure above 24 months (not rotated or pooled)

- A project manager and a QA engineer are allocated to the team, not billed on top

- DevOps and cloud pipeline setup on AWS, Azure, or GCP, including CI/CD

- Daily standups, sprint reviews, and monthly steering meetings in the client's time zone

- Code ownership and IP transfer at every merge, not at project close

- NDA and data-processing agreement signed before any engineer touches data

- Replacement within 7 business days if any engineer underperforms, with full handover

~40% Of in-house cost | Across the three engagement sizes above, the partnered team model costs roughly 40% of the equivalent in-house team in a US metro, a saving of roughly 54% to 63% on the table's figures, before accounting for hiring time and bench risk. |

Want a Written Cost Estimate for Your Specific Scope?

Share your product line, geographies, integrations, and target launch date. We reply within 48 hours with a scoped proposal, a team structure, and a realistic timeline. You interview every engineer before engagement. No team is assigned without your approval.

The Hidden Line Items Most Founders Miss in Year Two

Build budgets are usually priced accurately for year one. Year two is where budgets break, and it is almost always because of the same five line items. Reserve between 18% and 25% of the original build cost for these every year, and year-two surprises disappear.

Integration Drift

Bureau data feeds (ISO, LexisNexis, Verisk), KYC providers, payment processors, reinsurer APIs, and e-signature vendors change format at least once a year, sometimes twice. Each change is a small piece of engineering. The sum of them is a measurable slice of a senior developer's time every quarter. Ignoring this produces a platform that fails silently as upstream partners roll out new fields.

Regulatory Reporting Cycles

Quarterly NAIC statements, half-yearly IRDAI returns, annual Solvency II pillar 3 disclosures, and ad-hoc market conduct exam submissions consume engineering hours every cycle. The first year, you build the reports. Every year, you maintain them against rule changes, and rule changes happen every year.

Rate Filing Implementations

If the product team files a new rate or a new factor, engineering implements it. A responsive MGA files rate changes monthly. That is twelve engineering changes a year on the rating engine alone, each one versioned, tested, and audit-logged. Budget the engineering time or budget the lost speed-to-market.

Audit and Examination Response

State DOI examinations, reinsurer treaty audits, and internal SOC reviews each generate a queue of data extracts and reconstruction queries. A well-built audit log cuts this to hours per examination. A poorly built one turns it into weeks of scrambling, and that time comes from the same engineers building your next product. The math rarely works.

Talent Retention Premium

Insurance engineering is specialist work. Engineers who understand both software and the insurance contract are in short supply, and retention costs (equity refreshes, salary top-ups, conference budgets) run higher than generic SaaS retention costs. Budget 10% to 15% above the generic market data for this line item, or budget the cost of replacing your senior engineer in month 14.

Five Hidden Year-Two Budget Lines

1 Integration drift | 2 Reporting cycles | 3 Rate filings | 4 Audit response | 5 Retention premium |

Many carriers handle year-two integration drift and reporting cycles through a structured support and maintenance retainer rather than absorbing the cost inside the core build team, and version changes to bureau feed schemas and framework dependencies are typically bundled into a version upgrade services engagement that runs on a fixed annual schedule.

The Compliance Architecture: How Audit-Ready Platforms Are Actually Built

The Four Regulators That Shape the Design

Every line of code in an insurance platform lives under at least one regulator. Four authorities cover roughly 95% of the compliance surface that a modern platform touches, and reading their primary material before the architecture review changes the architecture review. The table below maps each one to the design decision it drives.

| Authority | Jurisdiction | What It Shapes in Your Build |
| --- | --- | --- |
| NAIC | United States (model laws adopted state by state) | Rate filings, market conduct exams, annual statements, statutory accounting principles |
| IRDAI | India | Product approval, sandbox rules, data residency, insurer capital and solvency |
| FCA | United Kingdom (conduct regulator for insurers and brokers) | Conduct of business, fair value assessments, product governance, and complaints handling |
| EIOPA | European Union | Solvency II pillar reporting, reinsurance recognition, cross-border passporting, and IT resilience |

For US builds, the NAIC's market conduct regulation sets out the rate, form, and market conduct rules that every state adapts to local statute. Indian builds start with the sandbox and product filing framework published by the Insurance Regulatory and Development Authority of India, whose 2025 regulatory reforms, covered in detail on Mondaq, mandate electronic record-keeping, data localisation, and board-approved governance policies, and also govern insurer solvency.

UK carriers and brokers read the conduct and product governance sections of the FCA handbook; the FCA's own thematic review on general insurance product governance makes clear exactly what fair-value assessment, complaints handling, and customer-outcome expectations look like in practice. EU carriers and anyone passporting into the Union should read the EIOPA guidance on DORA, which has applied since 17 January 2025 and shapes both capital reporting and operational-resilience requirements across the insurance sector.

The Seven Components of an Audit-Ready Architecture

Every audit-ready platform we have shipped has the same seven components. Each one is implemented in the foundation sprint, not retrofitted later. Retrofitting any of them after go-live costs 5 to 10 times what building them into the foundation would have cost, and the migration window usually falls during an examination cycle, which is the worst possible time.

The Seven Components of an Audit-Ready Platform

- Immutable event log: every write captured with actor, timestamp, before-state, and after-state; append-only, never edited

- WORM document storage: every generated document (declarations, schedules, forms, letters) is stored with retention-period locks

- Versioned rate and rule tables: every filed rate preserved by effective date; the rating engine always reads the correct version for the transaction date

- Report generators driven by the event log: statutory filings, bordereaux, and regulator responses, all built from the same source of truth

- Role-based access with full audit: who saw which policy when, with read events logged alongside write events for sensitive data

- Data residency boundaries: explicit routing of regulated data to the required geography; IRDAI, GDPR, and state rules enforced at the infrastructure layer

- Model audit trail for AI decisions: training data archived, model version tagged, every inference call logged with input, output, and version

How Regulator Responses Actually Work in a Well-Built Platform

When a state department of insurance sends a market conduct exam notice, the standard first request is a claims sample with complete adjudication history. A legacy platform turns this into a three-week scramble: engineers pulling data from multiple systems, adjusters reconstructing timelines from email threads, and a compliance officer spending weekends formatting extracts.

A well-built platform turns it into a four-hour task: one query against the event log replays the exact state of each sampled claim at every inflection point, exports it in the examiner's preferred format, and attaches the supporting documents from WORM storage. Same exam, same questions, different architecture, two orders of magnitude different response cost.
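As a rough sketch of that "one query" response, the code below replays a sampled claim from a flat event list and exports its state at each inflection point as CSV. The event tuple layout, the column names, and the CSV format are assumptions for the example; an examiner's preferred format would differ.

```python
import csv
import io

# Hypothetical event log rows: (claim_id, day, actor, field, new_value, reason).
EVENTS = [
    ("CLM-1", 0, "system", "status", "open", "FNOL received"),
    ("CLM-1", 14, "adjuster.a", "reserve", 5000, "initial reserve"),
    ("CLM-2", 3, "system", "status", "open", "FNOL received"),
    ("CLM-1", 62, "adjuster.b", "reserve", 12000, "repair estimate received"),
    ("CLM-1", 70, "adjuster.b", "status", "paid", "payment issued"),
]


def replay_claim(claim_id, events):
    """Yield (day, actor, reason, state after the event) for one claim, in day order."""
    state = {}
    for cid, day, actor, field, value, reason in sorted(events, key=lambda e: e[1]):
        if cid != claim_id:
            continue
        state = {**state, field: value}
        yield day, actor, reason, dict(state)


def export_sample(claim_ids, events):
    """Build a CSV timeline for the sampled claims."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["claim_id", "day", "actor", "reason", "status", "reserve"])
    for claim_id in claim_ids:
        for day, actor, reason, state in replay_claim(claim_id, events):
            writer.writerow([claim_id, day, actor, reason,
                             state.get("status", ""), state.get("reserve", "")])
    return buffer.getvalue()


report = export_sample(["CLM-1"], EVENTS)
```

Each exported row shows the claim as it stood after that event, so the timeline is derived from the log itself rather than reconstructed from email threads.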

What Compliance Costs to Build In vs Bolt On

Building compliance into the foundation typically adds 12% to 18% to the year-one engineering budget. Bolting it on after a regulator query typically costs 4x to 8x that amount, plus the reputational and capital cost of the finding itself. For a mid-sized carrier, the math is usually decisive within the first examination cycle.

The teams that resist building compliance in early are almost always the same teams we see rebuilding it in year two under much worse conditions, and the specific line items where the cost appears are the ones covered by our software product engineering services for regulated platforms.

For teams already past go-live who need to close compliance gaps without stopping product delivery, our software development outsourcing model provides a parallel squad that works the compliance retrofit without pulling engineers off the roadmap.

12-18%: the cost to build in early. Embedding compliance into the foundation sprint typically adds 12% to 18% to the year-one engineering budget. Retrofitting the same components after a regulator query costs 4x to 8x that amount and arrives during the worst possible timeline.

Want a Read on Your Compliance Architecture Before the Next Examination?

Send your current audit log design, your statutory report generation approach, and the regulators you file with. We return a 2-page architecture review within 5 business days, naming the three highest-risk gaps and what each will cost to fix before the next exam cycle, vs. during one. No sales motion, no commitment.

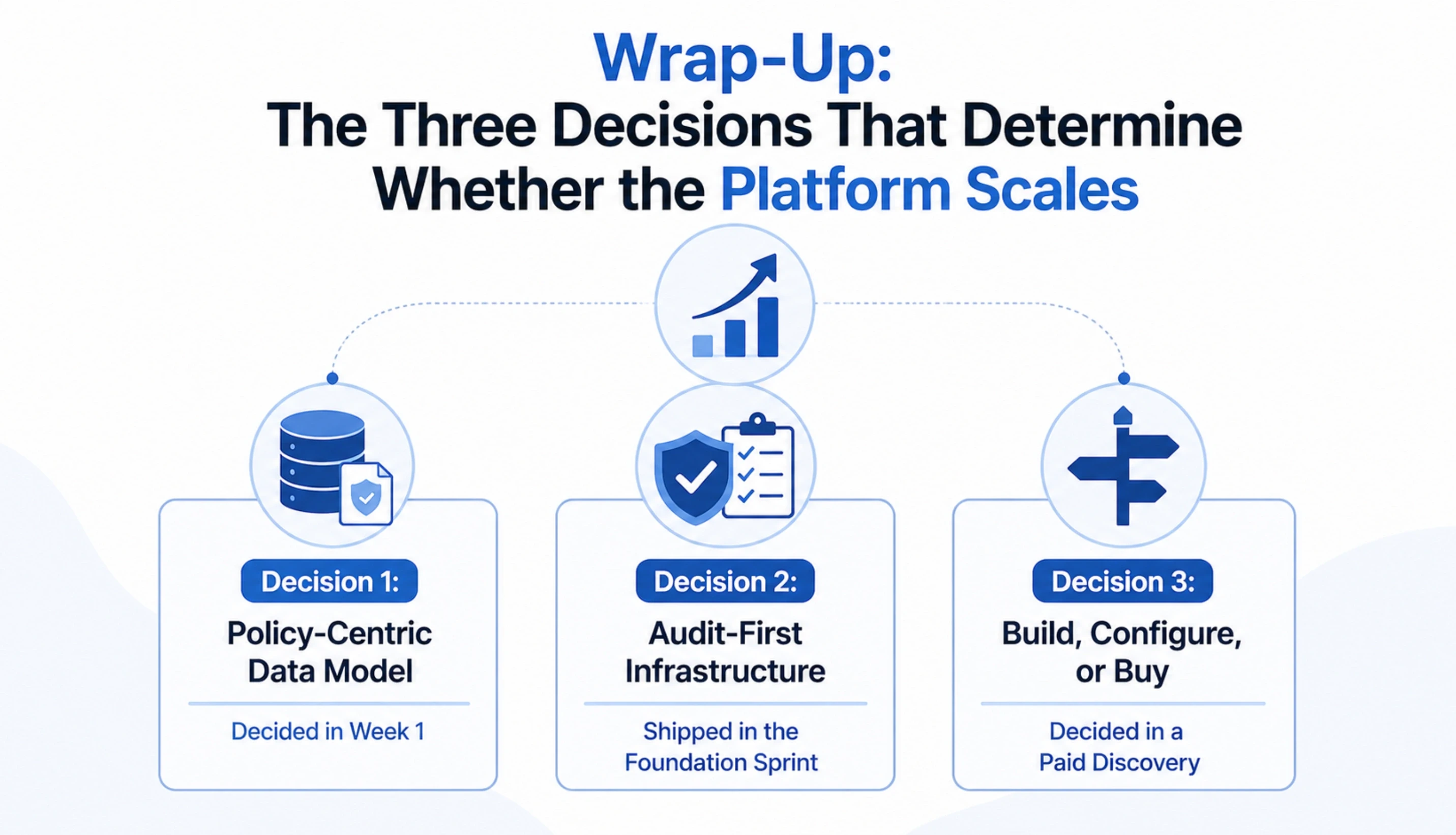

Wrap-Up: The Three Decisions That Determine Whether the Platform Scales

Dozens of decisions go into an InsurTech build. Three of them disproportionately determine whether the platform scales past the first 100,000 policies. Get these right in scoping, and the rest is tractable engineering. Get these wrong, and no sprint velocity will save the platform.

Decision 1: Policy-Centric Data Model, Decided in Week 1

The primary entity is the policy, not the customer or the account. Every query, every report, every regulator submission joins back through the policy. Teams that decide this in week 1 ship clean. Teams that decide it in week 30 rebuild.
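A minimal sketch of what "policy-centric" means in code, assuming plain Python dataclasses. Every class and field name here is hypothetical, and the ratio shown is a simplified paid-to-written figure, not a statutory loss ratio. The point is only that claims and endorsements cannot exist without a policy and always carry its number.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Endorsement:
    policy_number: str   # every child record joins back to the policy
    effective: date
    description: str


@dataclass
class Claim:
    policy_number: str
    claim_id: str
    loss_date: date
    paid: int = 0


@dataclass
class Policy:
    policy_number: str
    insured_name: str    # the customer is an attribute, not the root entity
    effective: date
    expiry: date
    written_premium: int
    endorsements: list[Endorsement] = field(default_factory=list)
    claims: list[Claim] = field(default_factory=list)

    def add_claim(self, claim_id: str, loss_date: date, paid: int = 0) -> Claim:
        """Create a claim only if the loss falls inside the policy period."""
        if not (self.effective <= loss_date <= self.expiry):
            raise ValueError("loss date outside policy period")
        claim = Claim(self.policy_number, claim_id, loss_date, paid)
        self.claims.append(claim)
        return claim

    def paid_to_written_ratio(self) -> float:
        """Simplified illustration: paid claims over written premium."""
        return sum(c.paid for c in self.claims) / self.written_premium


policy = Policy("POL-1001", "Acme Logistics", date(2026, 1, 1),
                date(2026, 12, 31), written_premium=2000)
claim = policy.add_claim("CLM-001", date(2026, 3, 10), paid=500)
ratio = policy.paid_to_written_ratio()
```

In an account-centric model the same claim would hang off a customer record, and the policy-period check above would have nowhere natural to live.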

Decision 2: Audit-First Infrastructure, Shipped in the Foundation Sprint

The audit log is the spine of the system, not a side-table. Every regulator report, every reinsurance settlement, every examination response is generated from the event log. Shipping this in sprint 1 makes everything after it possible. Bolting it on later does not work, and we have yet to see a single exception to that rule in 13 years.

Decision 3: Build, Configure, or Buy, Decided in a Paid Discovery

The three paths are not interchangeable. The wrong path is not a small inefficiency. It is 18 to 24 months of lost time and a seven-figure rebuild cost. A paid two-week discovery that forces the decision with real data is the cheapest insurance you can buy before you spend the first engineering dollar.

Founders who complete discovery and move to build typically engage through our MVP development company for the first 12 to 16 weeks, which produces a working platform against a single product line before the full core team is assembled.

Everything else in this playbook (the four-layer architecture, the 40-week delivery plan, the cost table, the hidden line items, the misconceptions) follows from those three decisions. Get them right, and the rest of the build is a series of solvable engineering problems. Get them wrong, and no amount of sprint discipline recovers the lost time.

The Three Decisions, Ranked by Leverage

- 01 Policy-Centric Data Model: decided in week 1

- 02 Audit-First Infrastructure: shipped in the foundation sprint

- 03 Build, Configure, or Buy: decided in a paid discovery

Ready to Run the Three Decisions With a Team That Has Done This Before?

A two-week paid discovery produces a written architecture, a team structure, a 40-week plan, and a fixed-fee proposal. If the answer is buy, not build, we say so and we walk away. If the answer is build, the discovery output converts directly into the first sprint.

Frequently Asked Questions

What is InsurTech and how does it work?

InsurTech is the category of software and business models that apply modern engineering, data science, and distribution methods to the insurance contract lifecycle. It works by re-architecting the four historical pillars of insurance (underwriting, policy administration, claims, and reinsurance) around a policy-centric data model, event-driven workflows, and real-time integrations with bureau data, payments, and document systems.

How is InsurTech different from fintech?

The data model, the regulatory surface, and the failure modes are all different. Fintech is account-centric and regulated mainly by banking authorities, PCI DSS, PSD2, and AML rules. InsurTech is policy-centric and regulated by state insurance departments in the US, IRDAI in India, FCA and PRA in the UK, and EIOPA in the EU, plus Solvency II, NAIC model laws, and ACORD data standards. A fintech failure is usually a transaction you can retry. An InsurTech failure is a mispriced contract or an under-reserved claim that compounds into a loss ratio problem quarters later.

How much does it cost to build an insurance platform in 2026?

A production MVP for a single product line in a single geography runs $220,000 to $450,000 and takes 9 to 14 months with a dedicated team of 4 to 6 engineers. A full core with 2 to 3 product lines runs $500,000 to $1.1M over 14 to 20 months. Multi-geography, multi-line platforms with an AI layer run $950,000 to $1.8M over 18 to 24 months. The hidden costs most founders underestimate are integration, maintenance, and regulatory reporting; reserve 18% to 25% of the original build cost for these every year.

How do you build an insurance platform from scratch?

Start with a two-week discovery that nails the product line, target geographies, and the first 12 months of the distribution plan. Then ship the foundation sprint (policy data model, audit log, identity and access, event bus, deployment pipeline).

Then build the core lifecycle: quote-bind-issue first, then endorsements and renewals, then billing, then FNOL and claims. Then add the intelligence layer, the distribution surfaces, and the first regulatory filings. Run parallel production testing against a sample book before go-live. A 40-week timeline with a 6 to 9 engineer team produces a working platform ready for a first partner or carrier.

How is custom InsurTech software different from Guidewire or Duck Creek?

Guidewire and Duck Creek are configurable commercial cores built for standard personal and commercial lines at carrier scale. They ship with pre-built workflows and compliance reports that cover most traditional insurance products.

Custom software is engineered specifically for a product line, a distribution model, and a regulatory footprint, making it faster to reconfigure and better fitted to exotic or rapidly evolving products. For a standard carrier writing over 1M policies, commercial is usually cheaper over 7 years. For a specialty MGA, custom is usually cheaper by year 3.

How is NAIC, HIPAA, GDPR, and Solvency II compliance handled in a custom build?

Compliance is built into the foundation sprint, not bolted on before launch. The audit log captures every write with actor, timestamp, and before-and-after state, which satisfies the core documentation requirement under NAIC market conduct examinations, Solvency II Pillar 3, and IRDAI inspections. HIPAA-covered health insurance builds add PHI encryption at rest and in transit, role-based access boundaries, and breach notification workflows. GDPR and CCPA add data subject access request handling, consent management, and right-to-erasure flows scoped to non-contractual data.
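The write capture can be sketched as a thin store that refuses to change a record without logging who changed it, when, and what it looked like before and after. `AuditedStore`, `AuditEntry`, the actor name, and the policy fields below are hypothetical, and the in-memory dict stands in for a real database; treat it as an illustration of the shape of the record, not a definitive implementation.

```python
import copy
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any


@dataclass(frozen=True)
class AuditEntry:
    actor: str                # who made the write
    at: datetime              # when, in UTC
    entity: str               # which record, e.g. a policy number
    before: dict[str, Any]    # state prior to the write
    after: dict[str, Any]     # state once the write is applied


class AuditedStore:
    """In-memory stand-in for a table whose every write is audited."""

    def __init__(self) -> None:
        self._rows: dict[str, dict[str, Any]] = {}
        self.audit_log: list[AuditEntry] = []

    def write(self, actor: str, entity: str,
              changes: dict[str, Any]) -> dict[str, Any]:
        before = copy.deepcopy(self._rows.get(entity, {}))
        after = copy.deepcopy({**before, **changes})
        # Log first, then apply, so no write exists without an entry.
        self.audit_log.append(
            AuditEntry(actor, datetime.now(timezone.utc), entity, before, after)
        )
        self._rows[entity] = copy.deepcopy(after)
        return after


store = AuditedStore()
store.write("uw.jane", "POL-1001", {"status": "quoted", "premium": 1800})
store.write("uw.jane", "POL-1001", {"status": "bound"})
```

Deep copies keep each entry independent of later writes, so an old `before` snapshot cannot be altered by accident.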

What happens to policy and claims data if the engagement ends?

The client owns the code, the data, the infrastructure, and all intellectual property from day one. IP transfer happens at every merge, not at project close. Source code lives in a client-owned repository, cloud infrastructure runs in a client-owned account, and databases are accessed by the development team under a revocable role. At engagement end, access is revoked, a full handover package is delivered, and the platform continues to run under the client or a successor partner without interruption.

What tech stack is best for a modern insurance platform?

There is no single best stack, but there is a consistent pattern. For the core services, a mature server-side framework with a strong type system and a well-understood data layer wins: Laravel, Django, or Node.js with TypeScript are all defensible, and the choice usually follows the team's existing strengths. PostgreSQL is the default database. An event bus handles cross-layer messaging. The intelligence layer runs on Python for model work. The distribution layer uses React or Next.js for web and React Native or Flutter for mobile.

How long does it take to integrate bureau data feeds?

A single bureau integration (ISO ClaimSearch, LexisNexis, Verisk, a state MVR feed) typically takes 3 to 6 weeks of engineering from first credentials to production. That includes the sandbox setup, mapping the incoming schema to your policy data model, handling the edge cases, and building the monitoring around it.
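Most of those weeks go into the mapping and the edge cases. A minimal sketch of that step follows, assuming a motor vehicle record feed. The payload keys (`LIC_NO`, `VIOL_CNT`, `LAST_VIOL_DT`), the date format, and the `DrivingRecord` type are invented for illustration and do not reflect any real bureau's schema.

```python
from dataclasses import dataclass
from datetime import date, datetime
from typing import Any, Optional


@dataclass(frozen=True)
class DrivingRecord:
    policy_number: str            # the record joins back to the policy
    licence_number: str
    violation_count: int
    last_violation: Optional[date]


def map_mvr_payload(policy_number: str, payload: dict[str, Any]) -> DrivingRecord:
    """Map a hypothetical bureau payload onto the internal model."""
    licence = str(payload.get("LIC_NO", "")).strip().upper()
    if not licence:
        # Edge case: reject rather than store an unusable record.
        raise ValueError("payload has no licence number")
    # Edge case: a missing or null count is treated as zero.
    count = int(payload.get("VIOL_CNT") or 0)
    # Edge case: an empty date string means no violation on file.
    raw_date = payload.get("LAST_VIOL_DT") or None
    last = datetime.strptime(raw_date, "%Y%m%d").date() if raw_date else None
    return DrivingRecord(policy_number, licence, count, last)


with_history = map_mvr_payload(
    "POL-1001",
    {"LIC_NO": " d1234567 ", "VIOL_CNT": "2", "LAST_VIOL_DT": "20250314"},
)
clean = map_mvr_payload(
    "POL-1002",
    {"LIC_NO": "X1", "VIOL_CNT": None, "LAST_VIOL_DT": ""},
)
```

Keeping the mapping in one pure function makes it easy to test against recorded sandbox payloads before the feed goes to production.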

Is a custom build appropriate for a small MGA or broker?

Yes, and often it is the only path that makes commercial sense. A small MGA with 20,000 to 80,000 policies in a specialty line typically cannot justify the licensing cost of a commercial core. A custom platform on modern stacks, deployed on AWS or Azure, with a focused scope costs $250,000 to $600,000 to build and $4,000 to $9,000 per month to maintain.
