Python Async Architecture: How to Design APIs That Don't Block Under Load

Python async architecture for production APIs that never block under load. 2026 patterns, asyncio pitfalls, uvloop tuning, and proven event loop design.

Acquaint Softtech

Introduction: The Single Blocking Call That Brings Down Your Async API

Python async is the most powerful concurrency model available to backend engineers in 2026, and also the easiest to get subtly wrong. The async def keyword in front of your endpoint does not make it concurrent. It makes it a coroutine. If anything inside that coroutine blocks the event loop, the entire async architecture collapses into something slower than a synchronous app, because every request now waits for every other request before it can make progress. The framework does not warn you. The blocking call hides in plain sight, and production traffic finds it exactly when you can least afford it.

The cost of getting this wrong is real. According to a 2026 industry survey on Python async debugging published by johal.in, 68% of Python developers encounter asyncio blocking issues monthly, and 42% report production incidents directly caused by event loop blocking that cost over $10,000 per hour. The single-threaded event loop means any blocking operation halts the entire system, not just one thread, which is why a single bad library call can cascade into catastrophic failure under load.

This guide is the production-grade architecture playbook for Python async APIs in 2026. It covers how the event loop actually works, the patterns that keep it free, the patterns that quietly poison it, and the design discipline that separates an async stack that scales from one that breaks. It is written for senior backend engineers, tech leads, and CTOs who are either designing a new async system or pulling an existing one out of incident-driven firefighting.

If you are still building the team that will execute the async architecture, the complete guide to hiring Python developers in 2026 sets the wider hiring context. The patterns below assume you have engineers who can implement them safely and review them rigorously.

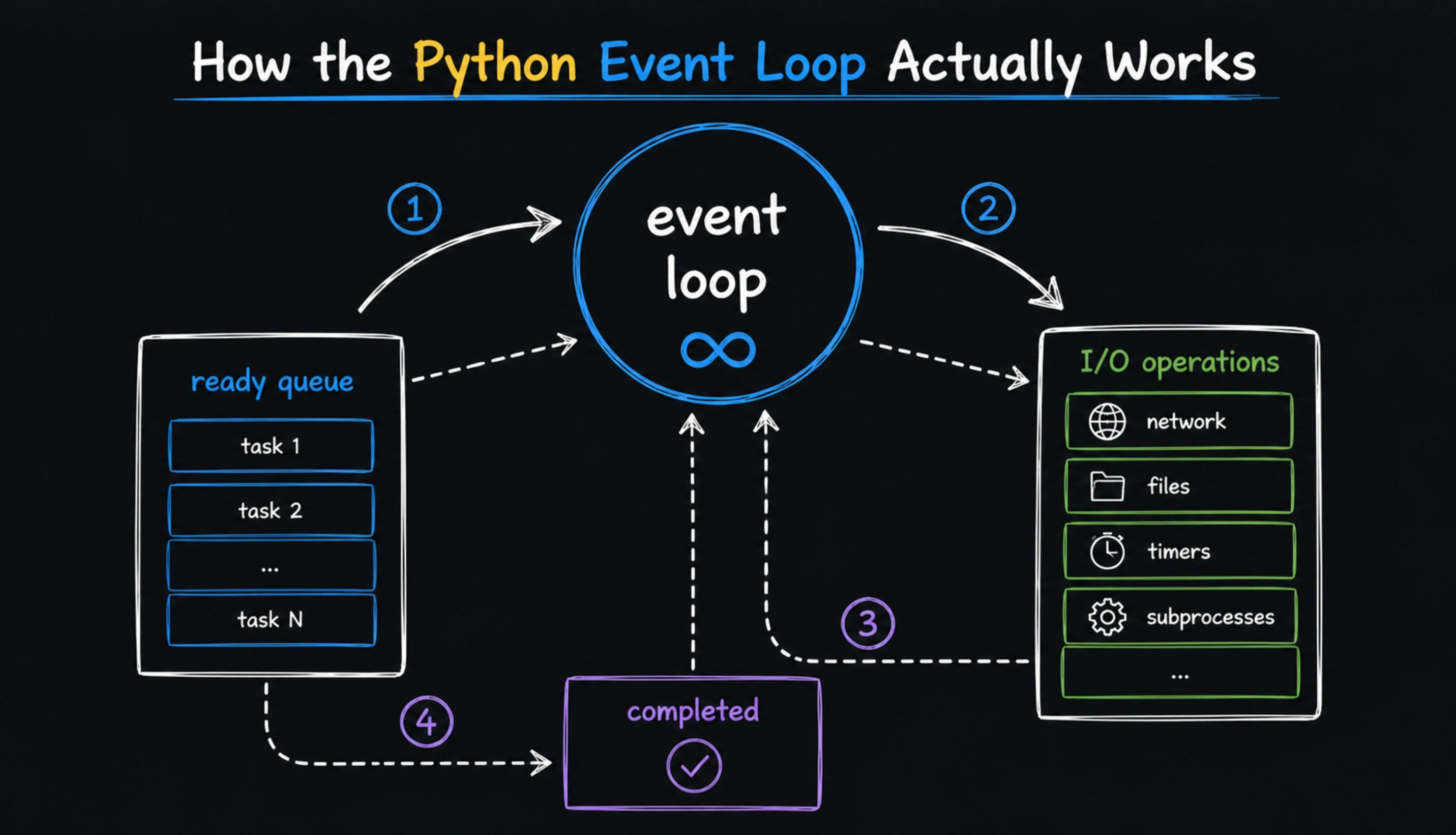

Foundation: How the Python Event Loop Actually Works

Most async bugs trace back to a misunderstanding of what the event loop does. It is not a thread pool. It is not parallel execution. It is a single-threaded scheduler that runs one coroutine at a time, switching between them only at await points. When a coroutine awaits, it yields control to the loop, which can then run another coroutine. When a coroutine blocks without awaiting, the loop has nowhere to go.

The Three Rules That Govern Every Async API

One event loop per worker process. Every Uvicorn worker runs a single asyncio loop. All async endpoints in that worker share the same loop and compete for its time.

Every request is a task. The loop interleaves tasks at await points, allowing thousands of concurrent requests to share one process. Without await points, no interleaving happens.

Async I/O is cooperative. Tasks must voluntarily yield control. A coroutine that does CPU work for 200ms blocks every other request for 200ms. There is no preemption.

Once these three rules are internalised, every architecture decision becomes clearer. The question stops being 'is this fast enough?' and becomes 'will this respect the event loop?'. The answer to the second question matters more than the first.
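A minimal sketch of the third rule, using only the standard library. The names `cooperative`, `blocking`, and `run_three` are illustrative, not part of any framework. Three coroutines that await `asyncio.sleep` overlap on one loop, while three that call `time.sleep` run back to back because nothing ever yields.

```python
import asyncio
import time


async def cooperative(delay: float) -> None:
    await asyncio.sleep(delay)  # yields to the loop while waiting


async def blocking(delay: float) -> None:
    time.sleep(delay)  # never yields: every other task waits


async def run_three(worker, delay: float = 0.1) -> float:
    """Run three copies of `worker` concurrently and return the elapsed seconds."""
    start = time.perf_counter()
    await asyncio.gather(*(worker(delay) for _ in range(3)))
    return time.perf_counter() - start


if __name__ == "__main__":
    print(f"cooperative: {asyncio.run(run_three(cooperative)):.2f}s")
    print(f"blocking:    {asyncio.run(run_three(blocking)):.2f}s")
```

The cooperative run should take roughly one delay and the blocking run roughly three, which is the same effect a hidden sync call has on every request sharing the worker.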

Three Async Myths That Cause Most Production Incidents

The recurring failure modes in async Python are remarkably consistent across teams. According to a 2026 production case study on FastAPI event loop blocking by TechBuddies.io, three misconceptions account for the majority of async incidents: 'if I use async def, it's automatically concurrent', 'more workers always means more throughput', and 'awaiting a sync function makes it non-blocking'. Each is wrong in a different way, and each is the root cause of incidents that would not have happened with one more code review pass.

Myth 1: async def Is Magic

async def only declares that the function returns a coroutine. If that coroutine internally calls a blocking library, the entire event loop stalls. Wrapping a synchronous database call in async def and awaiting it does not make the call async. It just lets you await something that still blocks.

Myth 2: More Workers Solve Everything

Spinning up more Uvicorn workers helps only if each worker uses the event loop efficiently. If every worker spends most of its time blocked on synchronous I/O, adding workers mainly adds overhead and memory use without improving throughput. Fix the blocking pattern first. Add workers second.

Myth 3: Background Tasks Are Free

asyncio.create_task with no tracking creates fire-and-forget tasks that can leak memory, swallow errors silently, and pile up on the event loop under bursty load. Because the loop keeps only weak references to tasks, an untracked task can even be garbage-collected before it finishes. Background tasks need bounded concurrency, error handling, and supervision. Free is the most expensive word in async architecture.
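One way to add the missing supervision, sketched with the standard library only. The helper `spawn`, the `background_tasks` set, and the `failures` list are hypothetical names introduced for illustration. A real service would likely emit a metric or alert instead of appending to a list.

```python
import asyncio
import logging
from collections.abc import Coroutine
from typing import Any

logger = logging.getLogger("background")

# Strong references: the event loop itself only keeps weak references to tasks.
background_tasks: set[asyncio.Task] = set()
failures: list[BaseException] = []  # illustrative stand-in for a metric or alert


def _on_done(task: asyncio.Task) -> None:
    background_tasks.discard(task)
    if task.cancelled():
        return
    exc = task.exception()  # retrieving it marks the exception as handled
    if exc is not None:
        failures.append(exc)
        logger.error("background task %s failed: %r", task.get_name(), exc)


def spawn(coro: Coroutine[Any, Any, Any]) -> asyncio.Task:
    """Start a background task that is tracked and whose errors are logged."""
    task = asyncio.create_task(coro)
    background_tasks.add(task)
    task.add_done_callback(_on_done)
    return task
```

This covers tracking and error handling. Bounding how many such tasks run at once still needs a semaphore or a queue in front of `spawn`.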

Pattern 1: Use Async-Native Drivers Everywhere I/O Happens

The single highest-impact rule in async architecture is to use async drivers for every I/O operation. A synchronous driver inside an async endpoint is the most common silent killer of FastAPI performance, because it works in development under low load and fails dramatically under production traffic.

Table: Async-Native Library Substitutes for Common Sync Tools

| Workload | Sync (Blocks Loop) | Async-Native Replacement |
|---|---|---|
| PostgreSQL | psycopg2 | asyncpg, psycopg 3 (async) |
| MySQL | PyMySQL | aiomysql |
| MongoDB | pymongo | motor |
| Redis | redis-py sync | redis-py async (redis.asyncio, which absorbed aioredis) |
| HTTP outbound | requests | httpx, aiohttp |
| File I/O | open() | aiofiles |
| S3 / object storage | boto3 sync | aioboto3 |
| ORM | Django ORM sync | Django async ORM, SQLAlchemy 2 async, Tortoise |

    # THIS LOOKS ASYNC AND IS NOT
    @app.get('/users')
    async def get_users():
        users = sync_db_client.fetch_all_users()  # BLOCKS LOOP
        return users

    # THIS IS ACTUALLY ASYNC
    @app.get('/users')
    async def get_users():
        users = await async_db_client.fetch_all_users()
        return users

Pattern 2: Run uvloop in Production for 2-4x Throughput

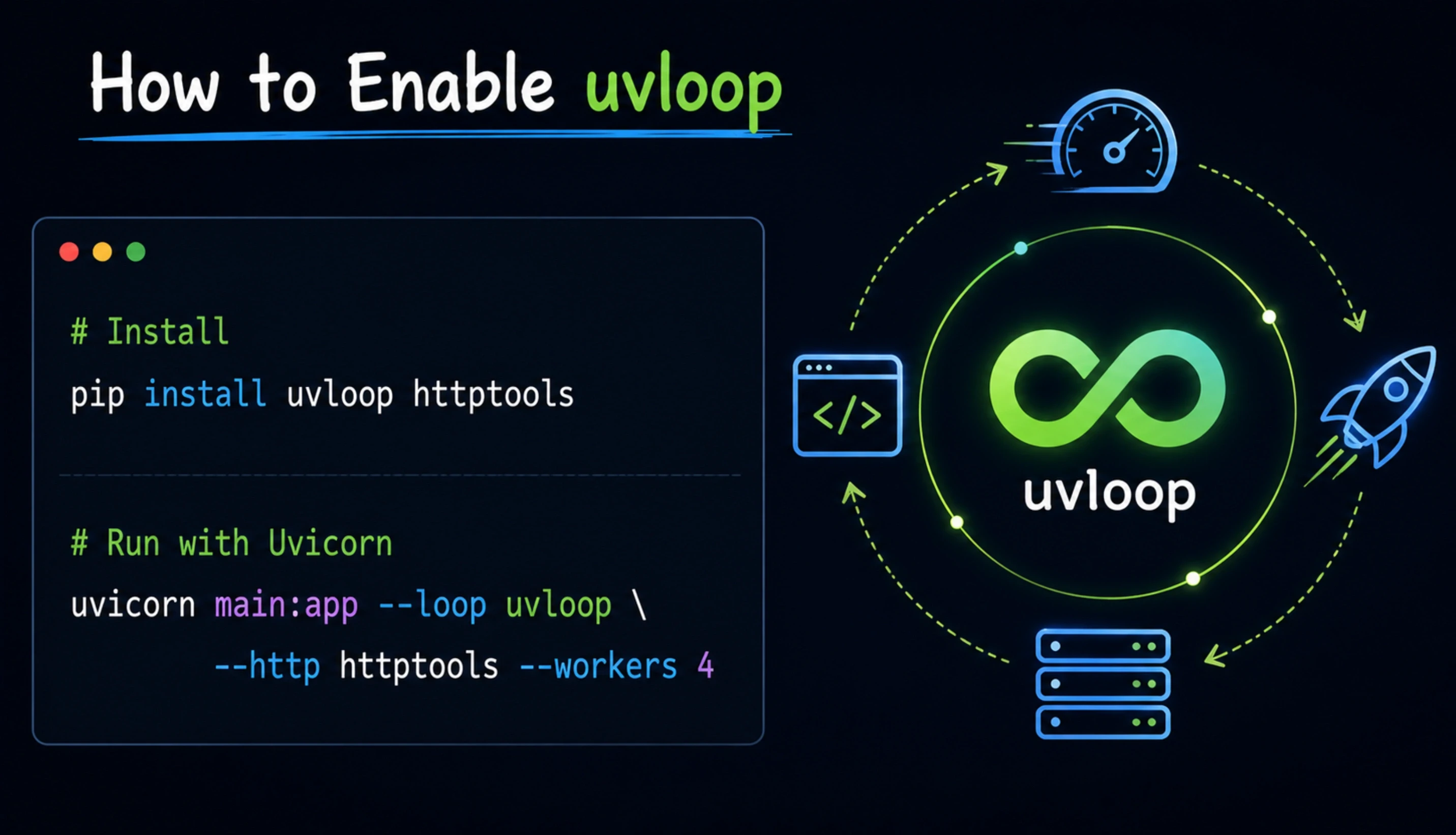

Python's default asyncio event loop is implemented largely in Python. A 2025 FastAPI performance analysis on DEV Community explains that uvloop replaces it with an event loop written in Cython and built on libuv, the same battle-tested C library that powers Node.js. Under high concurrency (1,000+ concurrent requests, common in production), uvloop can handle 2-4x more throughput than the default asyncio loop because the overhead of managing all those concurrent operations is dramatically reduced. The gain depends on the workload: endpoints dominated by database or downstream latency see less of it than loop-level benchmarks suggest.

How to Enable uvloop

    # Install
    pip install uvloop httptools

    # Run with Uvicorn
    uvicorn main:app --loop uvloop --http httptools --workers 4

Pair uvloop with httptools (also Cython-compiled) for HTTP parsing and you have removed the two largest sources of pure-Python overhead in a typical async stack. The configuration change is one command line flag and the throughput improvement is meaningful at any production scale.

Pattern 3: Offload CPU-Bound and Legacy Sync Work Properly

Not every operation in your codebase will be async. CPU-heavy work, legacy SDKs that only expose blocking APIs, and certain validation pipelines will always be synchronous. The async-correct way to handle them is to offload them outside the event loop, never to call them directly inside an async endpoint.

Three Offloading Strategies, Ranked by Use Case

asyncio.to_thread() for occasional blocking calls (Python 3.9+). The simplest option for one-off legacy SDK calls or short bursts of CPU work. Runs the function in asyncio's default thread pool so the loop keeps scheduling other tasks while it waits.

ProcessPoolExecutor for heavy CPU work. Use loop.run_in_executor with a process pool for image processing, encryption, or any CPU-bound operation. Threads do not speed up pure-Python CPU work because of the GIL; processes do.

Celery, RQ, or Dramatiq for true background work. If the work takes more than a few hundred milliseconds, do not offload inline. Send it to a worker queue and respond immediately to the client.
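The first two strategies can be sketched with the standard library alone. `legacy_sdk_call` is a hypothetical stand-in for a blocking SDK, and `cpu_sum` uses the built-in `sum` as a placeholder for real CPU-bound work. The executor is passed in by the caller, on the assumption that a production service creates one process pool at startup and reuses it.

```python
import asyncio
import time
from concurrent.futures import Executor, ProcessPoolExecutor


def legacy_sdk_call(seconds: float) -> str:
    """Hypothetical blocking SDK call (sleeps instead of doing real I/O)."""
    time.sleep(seconds)
    return "done"


async def call_legacy(seconds: float) -> str:
    # Strategy 1: occasional blocking call goes to the default thread pool.
    return await asyncio.to_thread(legacy_sdk_call, seconds)


async def cpu_sum(n: int, executor: Executor) -> int:
    # Strategy 2: CPU-bound work goes to an executor supplied by the caller,
    # normally a ProcessPoolExecutor so it runs outside this process's GIL.
    loop = asyncio.get_running_loop()
    return await loop.run_in_executor(executor, sum, range(n))


async def main() -> None:
    with ProcessPoolExecutor(max_workers=2) as pool:
        status, total = await asyncio.gather(
            call_legacy(0.1),
            cpu_sum(1_000_000, pool),
        )
    print(status, total)
```

Call `asyncio.run(main())` from under an `if __name__ == "__main__":` guard, which process pools require on platforms that start workers by spawning. Functions and arguments sent to a process pool must be picklable, which is why module-level functions work and lambdas do not.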

Practical Offload Pattern

    import asyncio

    # Bad: blocking call inside async endpoint
    # (POST rather than GET, because the endpoint takes a request body)
    @app.post('/normalize')
    async def normalize(payload: dict):
        return cpu_heavy_normalize(payload)  # BLOCKS LOOP

    # Good: offload to a worker thread so the loop keeps serving requests
    # (for sustained pure-Python CPU work, prefer a process pool)
    @app.post('/normalize')
    async def normalize(payload: dict):
        result = await asyncio.to_thread(cpu_heavy_normalize, payload)
        return result

Need Senior Python Engineers Who Have Shipped Async APIs at Scale?

Acquaint Softtech provides senior Python engineers with hands-on experience in asyncio, FastAPI, uvloop tuning, async PostgreSQL with asyncpg, Redis cluster integration, and Celery worker pipelines. Profiles in 24 hours. Onboarding in 48.

Pattern 4: Use TaskGroup for Structured Concurrency (Python 3.11+)

Before Python 3.11, the standard way to run multiple coroutines concurrently was asyncio.gather(). It works, but it has subtle failure modes: if one task raises an exception, others continue running, and cancelling them cleanly requires defensive code. Python 3.11 introduced TaskGroup, which provides structured concurrency with proper cancellation semantics out of the box.

Why TaskGroup Wins

    # Old pattern (still works)
    results = await asyncio.gather(coro1(), coro2(), coro3())

    # New pattern (Python 3.11+, safer)
    async with asyncio.TaskGroup() as tg:
        t1 = tg.create_task(coro1())
        t2 = tg.create_task(coro2())
        t3 = tg.create_task(coro3())
    # All tasks complete or all are cancelled together
    results = [t1.result(), t2.result(), t3.result()]

TaskGroup guarantees that if any task fails, all sibling tasks are cancelled, and the failure is propagated to the caller as an ExceptionGroup. This is exactly the behaviour you want for parallel I/O calls inside an endpoint. It eliminates an entire class of leaked-task bugs.

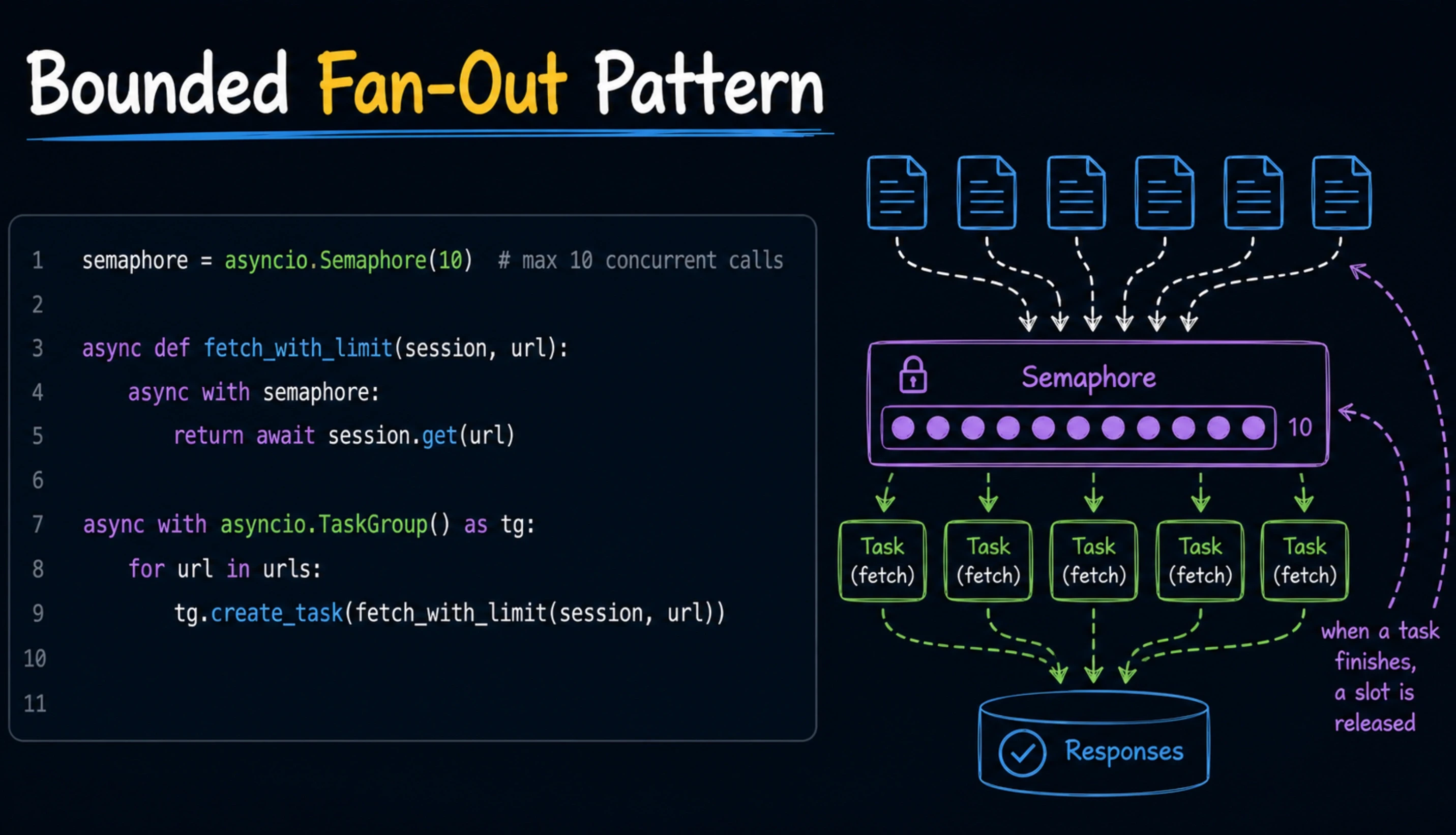

Pattern 5: Cap Concurrency With Semaphores

Unbounded concurrency is a denial-of-service vector waiting to happen. If your endpoint fans out to 100 downstream API calls in parallel for one user request, ten users can saturate the downstream service. The fix is asyncio.Semaphore, which caps how many tasks can run concurrently.

Bounded Fan-Out Pattern

    semaphore = asyncio.Semaphore(10)  # max 10 concurrent calls

    async def fetch_with_limit(session, url):
        async with semaphore:
            return await session.get(url)

    async with asyncio.TaskGroup() as tg:
        for url in urls:
            tg.create_task(fetch_with_limit(session, url))

This pattern is essential for any endpoint that fans out to external APIs, database queries, or microservices. The right concurrency limit depends on the downstream service's capacity, your own RPS budget, and the typical response time. Start at 10 and tune from there based on observed behaviour.

Pattern 6: Always Set Timeouts on Every External Call

A coroutine awaiting a hung downstream service does not block the event loop, but it holds its connection, its memory, and its client's request open indefinitely, and under load those stuck requests exhaust connection pools and concurrency limits. Timeouts are not optional in production async code. Every external call must have an explicit timeout, and every endpoint must have a worst-case latency budget.

Two Timeout Patterns That Actually Work

    # Single operation timeout (Python 3.4+)
    try:
        result = await asyncio.wait_for(fetch(), timeout=5.0)
    except asyncio.TimeoutError:
        handle_timeout()

    # Block-level timeout (Python 3.11+)
    async with asyncio.timeout(30.0):
        result = await long_operation()

Pattern 7: Centralise Async Clients at Application Startup

Creating a new HTTP client or database connection per request is wasteful and opens you up to connection exhaustion under load. Production async architecture creates clients once at application startup, reuses them across requests, and shuts them down cleanly at application exit.

FastAPI Lifespan Pattern

    from contextlib import asynccontextmanager

    import asyncpg
    import httpx
    from fastapi import FastAPI

    # DATABASE_URL is assumed to be loaded from configuration elsewhere

    @asynccontextmanager
    async def lifespan(app: FastAPI):
        # Startup: create reusable clients
        app.state.http = httpx.AsyncClient()
        app.state.db = await asyncpg.create_pool(DATABASE_URL)
        yield
        # Shutdown: close cleanly
        await app.state.http.aclose()
        await app.state.db.close()

    app = FastAPI(lifespan=lifespan)

Centralised async clients sit inside the broader architectural patterns covered in the guide on Python development architecture and frameworks, which walks through how dependency injection, lifecycle management, and connection pooling fit together in a complete async stack.

Pattern 8: Observability for Async Systems Specifically

Generic application monitoring is not enough for async systems. You need event-loop-specific metrics that surface blocking patterns before users feel them. Without these metrics, async incidents look like 'random latency spikes' until you instrument the loop itself.

Five Metrics Every Async Production Stack Needs

Event loop latency. How long a ready callback waits before the loop actually runs it (loop lag), alongside how much time the loop spends idle. Healthy loops have idle time and near-zero lag. Saturated loops do not.

Task count and lifetime. How many tasks are alive at any moment, and how long they live. Sudden spikes in long-lived tasks indicate leaks.

Per-endpoint p50, p95, p99 latency. p99 catches the tail latency that p50 hides. p99 is what users complain about.

Async-aware tracing. OpenTelemetry with async instrumentation traces requests across coroutine boundaries. Without async-aware tracing, distributed flows are invisible.

Worker queue depth. Celery Flower, Prometheus metrics on RQ, or queue-specific dashboards. A growing queue is the early warning that worker capacity is undersized.
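The first two metrics can be approximated without any monitoring library. The sketch below measures loop lag as the overshoot of a short `asyncio.sleep` and samples the live task count alongside it. `sample_loop_health` is an illustrative name. In production the readings would presumably feed a gauge or histogram rather than a list.

```python
import asyncio
import time


async def sample_loop_health(
    interval: float = 0.05, samples: int = 5
) -> list[dict[str, float]]:
    """Sample event loop lag (seconds late) and the number of live tasks."""
    readings: list[dict[str, float]] = []
    for _ in range(samples):
        start = time.perf_counter()
        await asyncio.sleep(interval)
        # Anything beyond `interval` is time this coroutine spent waiting
        # for the loop to get back to it.
        lag = max(0.0, time.perf_counter() - start - interval)
        readings.append({"lag": lag, "tasks": float(len(asyncio.all_tasks()))})
    return readings


async def demo() -> list[dict[str, float]]:
    monitor = asyncio.create_task(sample_loop_health(0.02, 5))
    await asyncio.sleep(0.03)
    time.sleep(0.2)  # simulated blocking call; shows up as a lag spike
    return await monitor


if __name__ == "__main__":
    for reading in asyncio.run(demo()):
        print(reading)
```

A sampler like this only sees blocking that overlaps one of its sleeps, so shorter intervals catch shorter stalls at the cost of a little more scheduling work.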

Anti-Patterns That Quietly Destroy Async Performance

Some async code looks correct in review and fails in production. The patterns below are the ones experienced reviewers catch and junior reviewers miss.

time.sleep() inside async def. Blocks the entire loop. Always use asyncio.sleep() in async code. The same applies to any other sync 'wait' function.

Sync ORM call inside an async endpoint. Calling sync SQLAlchemy or the sync Django ORM inside async def looks like async code but blocks the loop on every query, and can silently reduce throughput by 80% or more under concurrent load.

Unhandled exceptions in fire-and-forget tasks. asyncio.create_task() without await or storage causes silent failures. Use TaskGroup or store tasks for proper supervision.

Synchronous JSON serialization on hot endpoints. Default json module on multi-MB payloads adds tens of milliseconds. Switch to orjson or msgspec for 30-50% serialization improvement.

Blocking startup operations. Long synchronous I/O during app startup (large config loads, schema migrations) delays the event loop. Move these to async or to a separate startup hook.

These patterns are common contributors to expensive Python rebuilds and architecture rewrites, mapping closely to the warning signs covered in the guide on Python development expensive red flags.
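Several of these anti-patterns can be surfaced in development or staging with asyncio's built-in debug mode, which logs any callback or task step that runs longer than `loop.slow_callback_duration`. The sketch below is a minimal illustration. `handler_with_hidden_block` is a hypothetical endpoint body, and the 50 ms threshold is an arbitrary choice.

```python
import asyncio
import logging
import time


async def handler_with_hidden_block() -> None:
    time.sleep(0.2)  # stands in for a sync driver call inside async def


async def main() -> None:
    loop = asyncio.get_running_loop()
    loop.slow_callback_duration = 0.05  # warn on steps longer than 50 ms
    await asyncio.create_task(handler_with_hidden_block())


if __name__ == "__main__":
    logging.basicConfig(level=logging.WARNING)
    # debug=True makes the loop time every callback and log the slow ones
    # through the "asyncio" logger.
    asyncio.run(main(), debug=True)
```

Debug mode adds overhead, so it is better suited to test suites and staging than to production traffic.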

Production Deployment Checklist

Async architecture is only as good as the deployment that runs it. The configuration below is the production-grade default for FastAPI plus Uvicorn plus Gunicorn in 2026.

Table: Production Async Deployment Checklist

| Layer | Production Default | Why |
|---|---|---|
| Event loop | uvloop | 2-4x throughput vs default asyncio |
| HTTP parser | httptools | Cython-compiled, faster header parsing |
| ASGI server | Uvicorn | ASGI native, designed for asyncio |
| Process manager | Gunicorn | Multi-worker, fault isolation |
| Worker count | (2 x CPU cores) + 1 | Common Gunicorn starting point for I/O-bound services; tune with load tests |
| JSON serializer | orjson or msgspec | 30-50% faster than default json |
| Database driver | asyncpg / motor / aiomysql | Native async, pool-aware |
| Outbound HTTP | httpx async client | Async-first, modern API |
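Parts of the checklist can be captured in a Gunicorn configuration file, which is plain Python. The values below are assumptions to adapt, not tuned recommendations. The listen address and timeouts in particular are placeholders. Uvicorn's Gunicorn worker class is understood to auto-detect uvloop and httptools when they are installed, and newer Uvicorn releases point to a separate uvicorn-worker package for this class, so verify both against the versions you deploy.

```python
# gunicorn.conf.py: a starting point, not a tuned configuration
import multiprocessing

bind = "0.0.0.0:8000"  # assumed listen address

# The checklist's default; treat it as an upper bound and tune with load tests.
workers = multiprocessing.cpu_count() * 2 + 1

# ASGI worker class provided by Uvicorn for use under Gunicorn.
worker_class = "uvicorn.workers.UvicornWorker"

timeout = 30           # seconds before Gunicorn restarts an unresponsive worker
graceful_timeout = 30  # seconds allowed for in-flight requests on shutdown
keepalive = 5          # seconds to hold idle keep-alive connections open
```

With the file next to the application, the service would be started with `gunicorn main:app -c gunicorn.conf.py`, where `main:app` is the same module path used in the Uvicorn command earlier.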

For the full 24-month ownership cost picture across an async Python backend, including infrastructure, observability, and engineering capacity, the analysis on ownership cost of Python projects walks through the operational economics in detail.

What to Look for When Hiring for Async Python Roles

Async expertise is one of the most consistently misjudged skills in Python hiring. Many engineers list asyncio on their resume and have not shipped a production async system. The interview questions below separate real experience from surface familiarity.

Can they explain why time.sleep blocks but asyncio.sleep does not? If the answer is fuzzy, they have not debugged a production loop blocking incident.

Do they know the difference between gather and TaskGroup? Modern async code uses TaskGroup. Engineers stuck in the asyncio.gather era are usually pre-Python 3.11 in their thinking.

Have they used uvloop in production? Direct experience tuning event loops separates senior engineers from competent juniors.

Can they describe a real async incident they debugged? Specific stories about loop saturation, leaked tasks, or sync calls in async paths predict real production capability.

For the broader hiring framework that surrounds these technical signals, especially when working with offshore or contracted async Python teams, the guide on red flags when outsourcing Python development covers how to spot weak technical claims before they become delivery problems.

How Acquaint Softtech Builds Async-Native Python APIs

Acquaint Softtech is a Python development and IT staff augmentation company based in Ahmedabad, India, with 1,300+ software projects delivered globally across healthcare, FinTech, SaaS, EdTech, and enterprise platforms. Our async API engagements follow the architectural framework described in the complete guide to hiring Python developers, and our senior engineers have shipped FastAPI systems handling well into the tens of thousands of RPS in production without event loop blocking incidents.

Senior Python engineers with asyncio production depth. Hands-on with FastAPI, Uvicorn, uvloop tuning, async PostgreSQL with asyncpg, Redis cluster integration, and Celery worker pipelines.

Async-first observability experience. Event loop instrumentation with Prometheus, async-aware tracing with OpenTelemetry, p99 latency dashboards, and Sentry async error capture.

Healthcare and FinTech compliance experience. GDPR-compliant async FastAPI platform delivered for BIANALISI, Italy's largest diagnostics group, processing patient records with audit-grade query logging.

Transparent pricing from $20/hour. Dedicated Python engineering teams from $3,200/month per engineer. Async architecture audits from $5,000.

To bring senior async Python engineers onto your project quickly, you can hire Python developers with profiles shared in 24 hours and a defined onboarding plan within 48.

The Bottom Line

Python async architecture is not a feature you turn on. It is a discipline you maintain in every code review. Use async-native drivers everywhere I/O happens. Run uvloop in production. Offload CPU and legacy sync work properly. Use TaskGroup for structured concurrency. Cap fan-out with semaphores. Set timeouts on every external call. Centralise clients at startup. Instrument the event loop, not just the application.

Get those eight patterns right and Python async holds up at any scale your business will reach in 2026. Get them wrong and your API will look fast in staging and break in production, exactly as the 42% of developers reporting incidents that cost over $10,000 per hour have discovered. The framework cannot save you from a blocking call. Discipline can. Build the discipline before traffic finds the gap.

Async API Latency Spikes Eating Your On-Call Time?

Book a free 30-minute async architecture review. We will look at your event loop instrumentation, identify the three most likely sources of blocking, and give you a written remediation plan with concrete code-level fixes. No sales pitch. Just senior engineers who have debugged this exact pattern in production.

Frequently Asked Questions

Does using async def automatically make my Python endpoint concurrent?

No. async def only declares that the function returns a coroutine. If the coroutine internally calls a blocking library, the entire event loop stalls. True concurrency requires that every I/O call inside the coroutine uses an async-native driver and that all CPU-heavy work is offloaded to a thread or process pool. The keyword is the start, not the destination.

What is the difference between asyncio.gather and asyncio.TaskGroup?

Both run multiple coroutines concurrently. asyncio.gather predates Python 3.11 and has subtle failure modes: when one task fails, sibling tasks continue running. TaskGroup, introduced in Python 3.11, provides structured concurrency with automatic cancellation: if any task fails, all sibling tasks are cancelled cleanly. For application code in 2026, TaskGroup is the default. Use gather only when supporting older Python versions.

Should I use uvloop in production?

Yes, on any production async API at meaningful scale. uvloop replaces Python's default asyncio loop with a Cython-compiled implementation based on libuv (the same library that powers Node.js) and delivers 2 to 4x throughput improvement under high concurrency. The configuration change is one command line flag. The throughput gain is meaningful at any production scale.

How do I detect if my async API is being slowed by a blocking call?

Three signals: rising p99 latency despite low CPU usage, requests queueing under modest load, and intermittent timeouts that are hard to reproduce in staging. Instrument event loop latency with Prometheus, use prometheus_fastapi_instrumentator for FastAPI specifically, and look for moments when the loop sits busy without I/O. Most blocking calls show up as a 'CPU is fine but everything is slow' pattern.

When should I use asyncio.to_thread vs Celery for offloading?

asyncio.to_thread is the right tool for occasional, short-duration blocking calls (legacy SDKs, brief CPU work under a few hundred milliseconds). Celery, RQ, or Dramatiq are the right tools for any work that takes longer, that needs retry logic, that should survive a restart, or that runs on a schedule. The dividing line is roughly 200 to 500 milliseconds. Below that, offload inline. Above it, send to a queue.

Do I need timeouts on every async call?

Yes, every external call without exception. A coroutine awaiting a hung downstream service holds a connection and keeps the client's request open indefinitely, even though the loop itself stays free to run other tasks. Use asyncio.wait_for for individual operations and asyncio.timeout (Python 3.11+) for blocks of code. Set timeouts based on the downstream service's typical p99 plus a buffer, not on optimism about how fast it will respond.

Is async always better than sync for Python APIs?

No, only for I/O-bound workloads. If your endpoint mainly does CPU work (encryption, image processing, ML inference) without waiting on external systems, sync code with multiprocessing is often faster and simpler than async. Async wins when you have many concurrent requests that spend most of their time waiting on databases, external APIs, or file I/O. Pick the right tool for the workload.
