How Learning Management Systems Work

An LMS is not a video library with a login screen. It is a structured system that owns enrollment, progress, assessment, and certification in one traceable architecture. Teams that treat it as a file folder spend 12 months rebuilding what should have been designed correctly in week one. This guide shows exactly how each layer works, and what it actually costs to build.

Manish Patel

As Head of Tech and Client Success at Acquaint Softtech, a software product development company for online learning platforms with more than 1,300 projects delivered across 13 years, I have watched more than 40 EdTech teams underestimate what a Learning Management System (LMS) actually does, and pay for that assumption six months into a build.

Most teams believe an LMS is a course hosting platform with user accounts and a progress bar. It is not. When that assumption drives architecture, the system breaks at the first point of real institutional pressure: a cohort of 5,000 learners attempting a proctored assessment during a live examination window, with compliance records that must be audit-ready.

This article explains precisely how the three core layers of an LMS work: course delivery, progress tracking, and assessment architecture, using the same technical framing we apply during our product discovery workshop for an LMS build before any code is written. For the wider EdTech platform context, see our complete guide to EdTech software development in 2026.

The Problem → Agitation → Solution

Problem: EdTech teams mistake a course hosting platform for a Learning Management System. The two share a UI. They do not share an architecture.

Agitation: The gap shows up at scale. When 10,000 learners attempt a compliance exam simultaneously, or when an accreditation audit demands learner records going back 3 years, a course hosting platform fails. An LMS does not.

Solution: Understand the three layers, course delivery, progress tracking, and assessment, as distinct engineering concerns, and build each one correctly from the first sprint.

- Founders and CTOs deciding between a custom LMS and existing platforms.

- Product teams designing course delivery, tracking, and assessment systems.

- Education leaders replacing or upgrading legacy LMS solutions.

- Technical leads building EdTech systems and implementing SCORM, tracking, and grading logic.

What an LMS Actually Is and What It Is Not

An LMS is not a course library. It is a learner, course, and assessment accounting structure that maintains a persistent record of who enrolled in what, what they completed, how they scored, and what credential they earned. The moment a learner's record needs to survive across devices, sessions, content types, and regulatory audits, you need an LMS.

The industry uses the term loosely, which causes expensive scoping mistakes. A platform that hosts video courses and logs play-through percentage is not an LMS. A platform that owns enrollment state, assessment scoring, grading logic, instructor assignment, certificate issuance, and audit-ready compliance records is an LMS. For teams evaluating existing platforms before committing to a custom build, reviewing top LMS vendors is a practical first step.

The four accountabilities that define an LMS

Enrollment and access control. The LMS assigns learners to courses, cohorts, or learning paths. It enforces access by role, enrollment date, prerequisite completion, or payment status. The administrator sets rules. The learner sees only what they are authorized to see. This is a permissions system, not a folder structure.

Content delivery and sequencing. The LMS does not merely host content. It enforces a sequence: the learner cannot access Module 3 until Module 2 is marked complete. It delivers content in formats the learner's device can render: SCORM (Sharable Content Object Reference Model) packages, xAPI (Experience API, also known as Tin Can API) statement streams, PDF documents, adaptive HTML5 modules, streamed video, or content created using an AI video generator. It also tracks what was consumed.

Progress persistence across sessions. The LMS stores every meaningful learner action: video pause point, quiz attempt number, time spent per section, last accessed slide, and current completion percentage. When the learner closes the browser on Wednesday and returns on Friday from a different device, the LMS resumes from the correct state. A CMS (Content Management System) does not do this.

Assessment ownership. The LMS administers, grades, and permanently stores assessment results. The gradebook lives inside the LMS. The certificate is generated by the LMS when the defined completion criteria are met. These records are the institutional proof of learning, and in regulated industries, they are the compliance record.

The Diagnostic Test

Ask your current platform four questions. Who enrolled and when? What did they complete and how long did it take? What did they score on each assessment attempt? What certificates have been issued and to whom? If it cannot answer all four accurately, you do not have an LMS. You have a video hosting platform with a login screen.

The Three-Layer Architecture Every LMS Needs

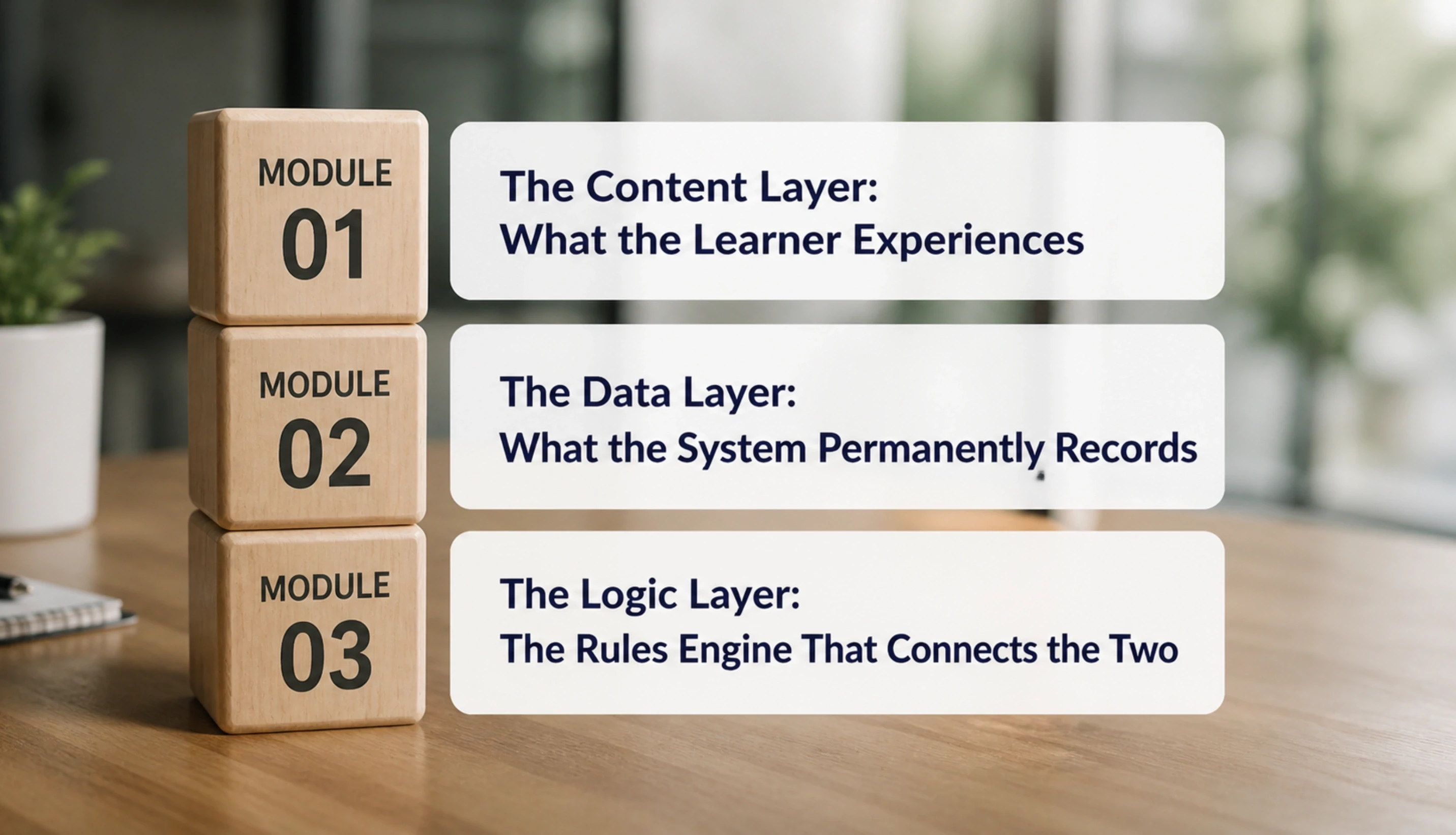

Understanding how learning management systems work at an engineering level means separating three distinct layers. They interact constantly, but must be designed as independent concerns. Teams that collapse them into one monolithic application produce systems that are fast to demo and impossible to maintain at scale.

MODULE 01 | The Content Layer: What the Learner Experiences

What it does: This is the surface the learner interacts with: the course player, the video renderer, the quiz interface, the discussion forum, the downloadable resource, and the navigation controls.

How it works: The content layer must be responsive, WCAG (Web Content Accessibility Guidelines) 2.1 AA compliant, and capable of rendering multiple content types without a full page reload. A React or Vue.js single-page application handles this correctly. The critical engineering requirement: the content layer makes no decisions about completion or grading. Its only job is to fire events upward and receive state instructions downward.

Engineering constraint: If the content layer contains grading logic, the system becomes impossible to audit. Grading logic belongs exclusively in the logic layer.

MODULE 02 | The Data Layer: What the System Permanently Records

What it does: This is the database structure beneath the LMS: learner profiles, course objects, enrollment records, content completion events, assessment attempts, scores, instructor assignments, certificate records, and audit logs.

How it works: The data layer must be normalized enough to support accurate reporting and flexible enough to handle concurrent writes during peak enrollment windows. PostgreSQL with Redis caching handles most LMS traffic patterns at up to 50,000 concurrent learners. The schema must include an append-only audit log for compliance-critical records: attendance, assessment scores, and certificate issuance events cannot be overwritten.

Engineering constraint: Storing only a single 'completion: true' boolean per learner per course is the most common data layer mistake. It cannot support any compliance audit, any grade dispute, or any meaningful analytics.

MODULE 03 | The Logic Layer: The Rules Engine That Connects the Two

What it does: This is the server-side rules engine: prerequisite enforcement, enrollment gating, grading formulas, adaptive path branching, auto-certificate triggers, SCORM completion criteria evaluation, and xAPI statement processing.

How it works: The logic layer receives events from the content layer, evaluates them against defined rules, and writes results to the data layer. Every SCORM launch, every quiz submission, and every video completion event passes through this cycle. The logic layer is the most frequently underbuilt part of a custom LMS. Teams build a beautiful course player and then realize there is no coherent system for evaluating whether completion criteria have actually been met.

Engineering constraint: The logic layer must be stateless and independently testable. A completion rule that cannot be unit-tested in isolation from the UI will produce inconsistent results at scale.

The interaction pattern is fixed: the learner acts in the content layer, the logic layer evaluates the action against defined rules, and the data layer records the result. Every feature in the LMS — gamification, adaptive paths, cohort management, certificate generation — is an extension of this three-layer cycle.
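The cycle described above can be sketched in a few lines. This is a minimal illustration, not a production design: the `ContentEvent` shape, the `RULES` thresholds, and the in-memory list standing in for the data layer are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ContentEvent:
    """Raw event fired upward by the content layer (no grading decisions)."""
    learner_id: str
    module_id: str
    kind: str      # e.g. "video_progress" or "quiz_submitted"
    value: float   # fraction watched (0-1) or quiz score (0-100)


# Hypothetical completion rules, keyed by event kind: minimum value to pass.
RULES = {"video_progress": 0.80, "quiz_submitted": 70.0}


def evaluate(event: ContentEvent, rules: dict[str, float]) -> bool:
    """Logic layer: a pure function, testable without any UI or database."""
    threshold = rules.get(event.kind)
    return threshold is not None and event.value >= threshold


def record(log: list[dict], event: ContentEvent, met: bool) -> None:
    """Data layer stand-in: append the raw event plus the evaluation result."""
    log.append({
        "learner_id": event.learner_id,
        "module_id": event.module_id,
        "kind": event.kind,
        "value": event.value,
        "criterion_met": met,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    })


audit_log: list[dict] = []
for ev in (
    ContentEvent("learner-1", "module-2", "video_progress", 0.65),
    ContentEvent("learner-1", "module-2", "video_progress", 0.92),
):
    record(audit_log, ev, evaluate(ev, RULES))
```

Because `evaluate` takes the rules as an argument and holds no state, a completion rule can be unit-tested with nothing more than an event and a dictionary.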

Want to Know How This Architecture Applies to Your Platform?

Acquaint Softtech reviews your current spec or concept and returns a team structure, architecture outline, and cost estimate within 48 hours. You interview the engineers before any engagement starts. No commitment until you are confident in the team.

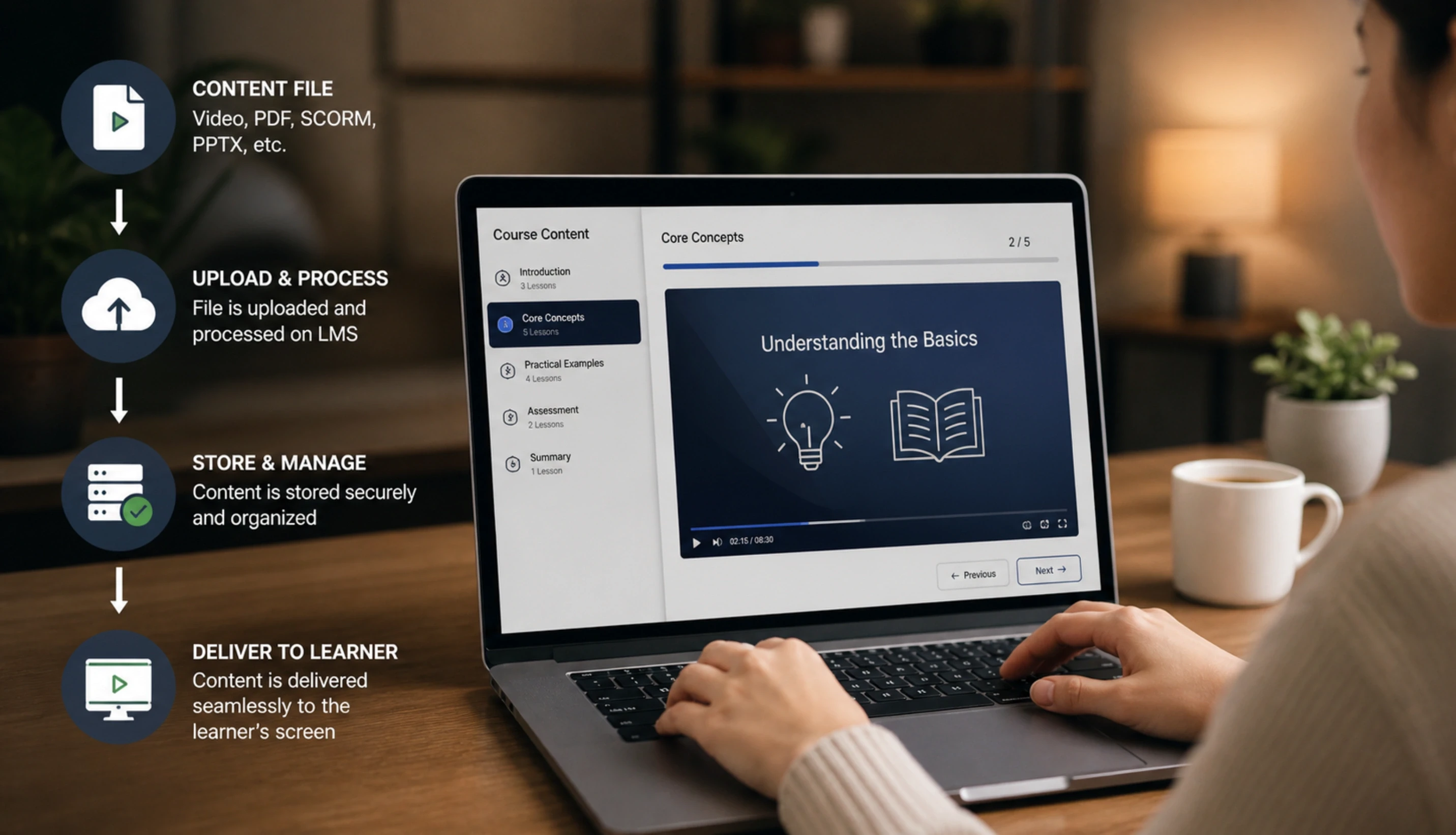

Course Delivery: From Content File to Learner Screen

Course delivery is the engineering path from a content file on a server to a learner interacting with it correctly on any device. The decisions made in this layer determine SCORM compliance, mobile experience, offline availability, and load performance under concurrent access.

Stage 1: Content ingestion and SCORM package validation

Course content arrives from an authoring tool, Articulate Storyline, Adobe Captivate, iSpring, or a custom course builder, packaged as a SCORM 1.2 or SCORM 2004 ZIP file, or as xAPI content targeting a Learning Record Store (LRS). The LMS must unzip the package, validate the imsmanifest.xml against the SCORM specification, and store the course structure in its database before any learner accesses the content. Skipping validation is how malformed packages produce broken learner experiences that only surface at the first cohort launch.

Stage 2: The SCORM runtime, where most LMS implementations break

When a learner launches a SCORM module, the LMS loads it in a sandboxed iFrame and exposes a standard JavaScript API. In SCORM 1.2, the content calls LMSInitialize() to start a tracking session, LMSSetValue() to record completion status or quiz scores, and LMSCommit() to save data to the server; SCORM 2004 uses the equivalent Initialize(), SetValue(), and Commit(). If the LMS does not implement these calls correctly, because of an iFrame origin restriction, a race condition on session load, or a timeout during commit, the learner's completion data is silently lost. This is the most common failure mode in poorly built LMS platforms, and it is invisible until a compliance audit demands proof of completion that the system cannot produce.
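The call order the runtime has to enforce can be modelled as a small state machine. The sketch below is a Python model of SCORM 1.2 behaviour for illustration only: the real adapter is a JavaScript object exposed to the content window, and the `Scorm12Session` class and its dictionary store are assumptions, not part of any library. The string return values and the error codes "0", "101", and "301" follow the SCORM 1.2 run-time conventions.

```python
class Scorm12Session:
    """Python model of the SCORM 1.2 call order; not a browser API adapter."""

    def __init__(self, store: dict[str, str]):
        self._store = store                 # stand-in for server-side persistence
        self._pending: dict[str, str] = {}
        self._initialized = False
        self.last_error = "0"               # "0" means no error

    def LMSInitialize(self, _: str = "") -> str:
        if self._initialized:
            self.last_error = "101"         # general exception
            return "false"
        self._initialized = True
        self.last_error = "0"
        return "true"

    def LMSSetValue(self, element: str, value: str) -> str:
        if not self._initialized:
            self.last_error = "301"         # not initialized
            return "false"
        self._pending[element] = value
        self.last_error = "0"
        return "true"

    def LMSCommit(self, _: str = "") -> str:
        if not self._initialized:
            self.last_error = "301"
            return "false"
        # Persist only on commit; a failure here must surface, never be swallowed.
        self._store.update(self._pending)
        self._pending.clear()
        self.last_error = "0"
        return "true"

    def LMSGetLastError(self) -> str:
        return self.last_error


db: dict[str, str] = {}
session = Scorm12Session(db)
early = session.LMSSetValue("cmi.core.lesson_status", "completed")  # before init
early_error = session.LMSGetLastError()
session.LMSInitialize("")
session.LMSSetValue("cmi.core.lesson_status", "completed")
session.LMSCommit("")
```

The early `LMSSetValue` call is the race condition in miniature: content that writes before the session exists gets "false" and an error code, and a runtime that ignores that signal is how records go missing.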

Stage 3: Adaptive bitrate video streaming

Video in an LMS is not a static file download. A 90-minute training video served as a single MP4 consumes unnecessary bandwidth and fails on mobile connections. A production LMS uses HLS (HTTP Live Streaming) or DASH (Dynamic Adaptive Streaming over HTTP) to serve video in small chunks at a quality level matched to the learner's current network speed. This requires a video transcoding pipeline, a CDN (Content Delivery Network) layer, and a player that supports adaptive quality switching without interrupting playback.

Stage 4: Offline access and sync for mobile learners

Corporate training platforms and K-12 mobile apps frequently require offline content access: the learner downloads a module while connected, completes it offline, and the LMS syncs progress when connectivity returns. This requires a local state store in the mobile app, a conflict resolution protocol, and a bulk-event sync API. Building the EdTech app in React Native handles this well because it provides direct access to device storage APIs that a mobile browser cannot reliably use.
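One possible shape for the bulk-event sync is shown below. It assumes each event carries a client-generated ID so that a batch sent twice is harmless, and it assumes a simple conflict rule (progress for a module never moves backwards). Both are design choices made for this sketch, not requirements stated above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProgressEvent:
    event_id: str        # client-generated UUID; makes re-sent batches idempotent
    learner_id: str
    module_id: str
    occurred_at: float   # device clock, seconds since epoch
    percent: int         # progress reported by the player, 0-100


def merge_offline_batch(
    server_events: list[ProgressEvent], batch: list[ProgressEvent]
) -> list[ProgressEvent]:
    """Append unseen events only; never overwrite what the server already holds."""
    seen = {e.event_id for e in server_events}
    merged = list(server_events)
    for event in sorted(batch, key=lambda e: e.occurred_at):
        if event.event_id not in seen:
            merged.append(event)
            seen.add(event.event_id)
    return merged


def current_percent(events: list[ProgressEvent], module_id: str) -> int:
    """Conflict rule assumed here: progress for a module never moves backwards."""
    return max((e.percent for e in events if e.module_id == module_id), default=0)


server = [ProgressEvent("a1", "learner-1", "m1", 100.0, 40)]
batch = [
    ProgressEvent("b2", "learner-1", "m1", 250.0, 100),
    ProgressEvent("a1", "learner-1", "m1", 100.0, 40),   # already on the server
    ProgressEvent("b1", "learner-1", "m1", 200.0, 70),
]
merged = merge_offline_batch(server, batch)
resent = merge_offline_batch(merged, batch)   # same batch retried after a timeout
```

Device clocks can be wrong, so a real implementation would likely also record the server receipt time alongside `occurred_at`.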

Progress Tracking: The Data Model Behind Learner Completion

Progress tracking is the layer most LMS teams get wrong. They record 'last page viewed' and call it completion. They record 'time on platform' and call it engagement. These are not the same things as completion and engagement. Accurate progress tracking requires a precise data model and explicit business rules for what counts as done.

The five event categories that must be tracked

Completion events: a defined criterion was met, for example the learner watched 80% of the video, scored 70%+ on the quiz, or submitted an assignment. Store the raw event, the evaluation result, the timestamp, and the device/session context. A boolean 'completed: true' is not enough for audit or analytics.

Active time tracking: not elapsed wall-clock time, but time the learner was actively engaged. The LMS uses 30-second heartbeat signals from the browser to measure active session time. SCORM content reports its own session duration in cmi.core.session_time (SCORM 1.2) or cmi.session_time (SCORM 2004). Compliance training in healthcare, finance, and aviation uses time-on-task as a regulatory metric.

Assessment attempt records: each attempt is stored individually, including the attempt number, start time, end time, score, pass/fail result, and whether it was a practice or a graded attempt. Compliance platforms enforce maximum attempt limits. Adaptive systems use attempt history to decide what content to serve next.

Path navigation: for branching or adaptive courses, which path did the learner take, which branches did they skip, and at what point did drop-off occur? This data drives the curriculum analytics the instructor uses to improve content.

Competency mapping: enterprise platforms map completion events to a competency taxonomy. Completing a module marks one or more competency nodes in the learner's profile. The administrator queries which learners have met which competencies and where skill gaps remain across a team or department.
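Active time, as opposed to wall-clock time, can be derived from heartbeat timestamps along these lines. The 30-second interval comes from the text; the 15-second tolerance and the output field names are assumptions for the example.

```python
HEARTBEAT_SECONDS = 30   # interval stated in the text
TOLERANCE_SECONDS = 15   # assumed allowance for network jitter


def summarize_heartbeats(timestamps: list[float]) -> dict[str, float]:
    """Split a session into verified active time and unverified gaps."""
    active = 0.0
    unverified = 0.0
    ordered = sorted(timestamps)
    for prev, curr in zip(ordered, ordered[1:]):
        delta = curr - prev
        if delta <= HEARTBEAT_SECONDS + TOLERANCE_SECONDS:
            active += delta
        else:
            unverified += delta
    return {"active_seconds": active, "unverified_seconds": unverified}


# Four heartbeats, a 20-minute gap, then two more heartbeats.
beats = [0, 30, 60, 90, 1290, 1320]
summary = summarize_heartbeats(beats)
```

For this session, end minus start gives 1,320 seconds, while the heartbeat record verifies only 120 seconds of activity and marks the remaining 1,200 seconds as unverified.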

The Compliance Record Rule

Progress records for compliance training (healthcare, financial services, aviation, and corporate legal) must be stored in an append-only audit log. Overwriting or deleting a progress record is a compliance violation regardless of the reason. Design the schema with append-only constraints before the first learner record is written, not after the first audit request arrives.
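One way to enforce append-only behaviour in the database itself, rather than trusting application code, is with triggers that reject updates and deletes. The sketch uses SQLite from the Python standard library so it runs anywhere; the table and trigger names are illustrative, and a PostgreSQL deployment would more likely use its own triggers or revoked UPDATE and DELETE privileges.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE progress_audit (
    id          INTEGER PRIMARY KEY,
    learner_id  TEXT NOT NULL,
    event_type  TEXT NOT NULL,
    payload     TEXT NOT NULL,
    recorded_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
);
CREATE TRIGGER progress_audit_no_update BEFORE UPDATE ON progress_audit
BEGIN SELECT RAISE(ABORT, 'progress_audit is append-only'); END;
CREATE TRIGGER progress_audit_no_delete BEFORE DELETE ON progress_audit
BEGIN SELECT RAISE(ABORT, 'progress_audit is append-only'); END;
""")

conn.execute(
    "INSERT INTO progress_audit (learner_id, event_type, payload) VALUES (?, ?, ?)",
    ("learner-1", "assessment_scored", '{"score": 82}'),
)


def try_statement(sql: str) -> str:
    """Run a statement and report whether the database rejected it."""
    try:
        conn.execute(sql)
        return "allowed"
    except sqlite3.DatabaseError as exc:
        return f"rejected: {exc}"


update_result = try_statement("UPDATE progress_audit SET payload = '{}'")
delete_result = try_statement("DELETE FROM progress_audit")
row_count = conn.execute("SELECT COUNT(*) FROM progress_audit").fetchone()[0]
```

A correction is then written as a new row that references the earlier one, so the original record and the amendment are both visible to an auditor.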

The eLearning platform case study Acquaint Softtech completed for a multi-tenant online learning provider demonstrates this data model at scale: 200+ courses across multiple client organizations, with progress records that needed to be accurate, isolated by tenant, and exportable for institutional audits. See the full eLearning platform development case study for the architecture details.

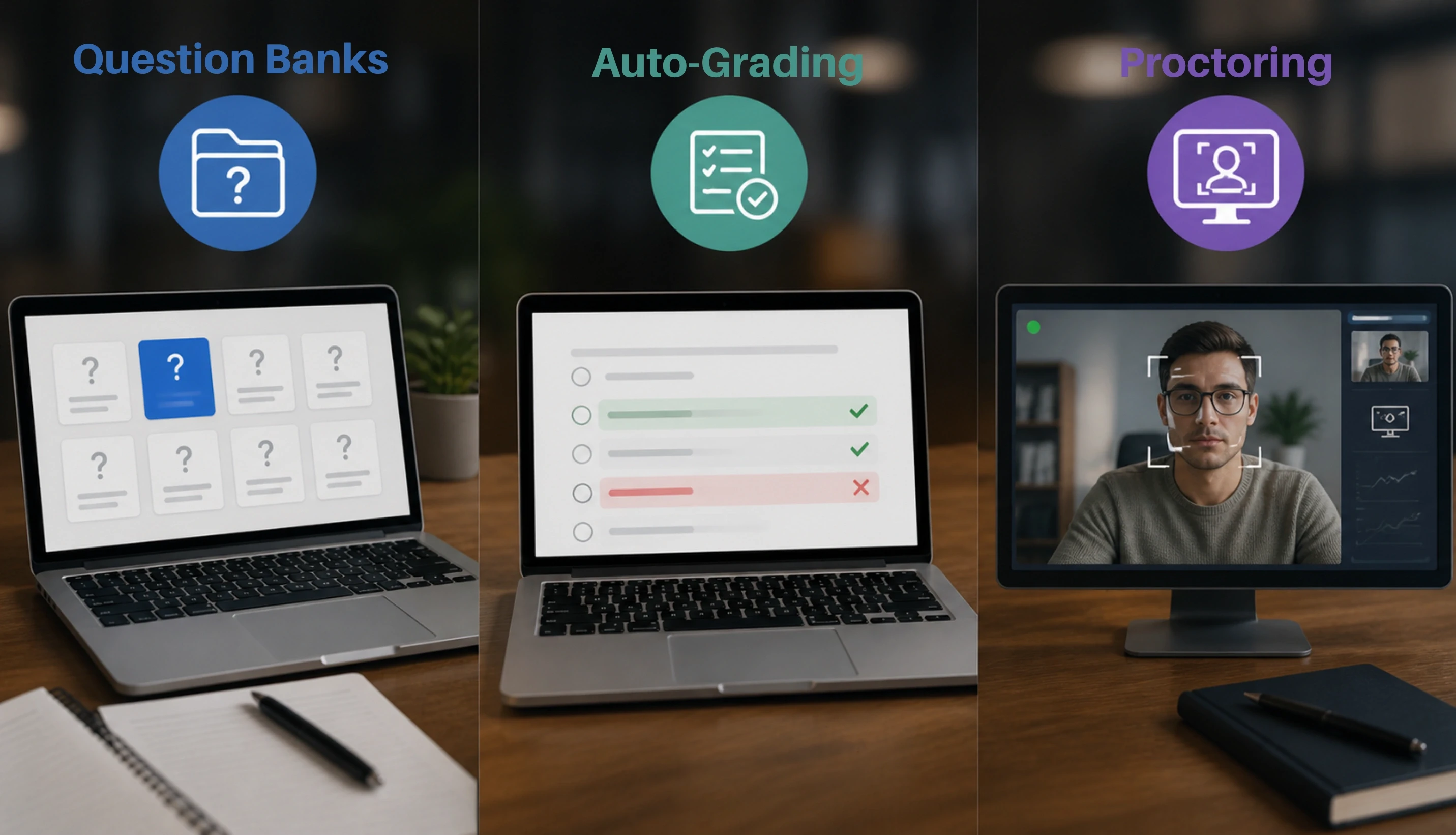

Assessment Architecture: Question Banks, Auto-Grading, and Proctoring

The assessment layer is where an LMS earns its credibility as an institutional accountability system. A platform that cannot reliably administer, grade, and permanently store assessment results is not a system that universities, corporate L&D teams, or regulated industries will trust with their learner records.

Question bank architecture

A question bank is not a list of questions. It is a structured database where each question carries: question type (multiple choice, true/false, fill-in-the-blank, drag-and-drop, essay, hotspot image), one or more correct answers, a point value, a difficulty rating, a topic tag, and a usage history. The LMS draws questions from the bank to build assessments - either a fixed selection or a randomized draw filtered by topic and difficulty. For a platform serving 50,000 active learners, a question bank needs 500 to 2,000 questions per subject area to prevent learners from memorizing the sequence.
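A randomized draw filtered by topic and difficulty could be sketched as follows. The `Question` fields are a subset of those listed above, the 1 to 5 difficulty scale is an assumption, and seeding the draw per attempt is one possible way to make a paper reproducible for later review.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Question:
    question_id: str
    topic: str
    difficulty: int   # assumed scale: 1 (easy) to 5 (hard)
    points: int


def draw_assessment(
    bank: list[Question], topic: str, difficulties: set[int], count: int, seed: int
) -> list[Question]:
    """Randomized draw filtered by topic and difficulty, reproducible per seed."""
    pool = [q for q in bank if q.topic == topic and q.difficulty in difficulties]
    if len(pool) < count:
        raise ValueError(f"only {len(pool)} matching questions, need {count}")
    # Storing the seed with the attempt lets an auditor rebuild the exact paper.
    return random.Random(seed).sample(pool, count)


# Hypothetical 40-question bank split across two topics.
bank = [
    Question(f"q{i}", "fire-safety" if i % 2 else "first-aid", 1 + i % 5, 1)
    for i in range(40)
]
paper_a = draw_assessment(bank, "fire-safety", {2, 3, 4}, count=5, seed=1001)
paper_b = draw_assessment(bank, "fire-safety", {2, 3, 4}, count=5, seed=1001)
```

The explicit error when the pool is too small matters in practice: a thin bank should fail loudly at assessment build time, not quietly serve the same five questions to every learner.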

Auto-grading logic

Objective question types (multiple choice, matching, ordering, and numeric entry) grade automatically and immediately. The grading engine must handle weighted questions (some worth more points than others), partial credit (fill-in-the-blank may accept multiple correct phrasings), and pass/fail thresholds that trigger downstream actions: remedial content assignment, certificate issuance, instructor notification, or enrollment in a retake cohort.

Essay and code-submission questions require either human grading, rubric-based scoring tools, or AI-assisted feedback. The LMS must hold the assessment in a 'pending human review' state and notify the assigned instructor or grader without losing the submission.
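The grading rules in this section can be illustrated with a small function. The `Item` structure, the way partial credit is expressed (answer mapped to a fraction of the points), and the status labels are assumptions for the sketch; an item with no accepted answers is treated as manually graded and puts the attempt into a pending state.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    item_id: str
    points: float
    accepted: frozenset[str]    # empty set marks a manually graded item
    partial: dict[str, float]   # answer -> fraction of points (assumed format)


def grade(items: list[Item], answers: dict[str, str], pass_mark: float) -> dict:
    """Weighted auto-grading with partial credit and a pass/fail threshold."""
    earned = 0.0
    possible = 0.0
    pending = []
    for item in items:
        possible += item.points
        if not item.accepted:
            pending.append(item.item_id)   # essay: hold for human review
            continue
        answer = answers.get(item.item_id, "").strip().lower()
        if answer in item.accepted:
            earned += item.points
        else:
            earned += item.points * item.partial.get(answer, 0.0)
    percent = round(100 * earned / possible, 1) if possible else 0.0
    if pending:
        status = "pending_human_review"
    else:
        status = "passed" if percent >= pass_mark else "failed"
    return {"percent": percent, "status": status, "pending": pending}


items = [
    Item("q1", 2.0, frozenset({"b"}), {}),
    Item("q2", 5.0, frozenset({"photosynthesis"}), {"photo synthesis": 0.5}),
    Item("q3", 3.0, frozenset({"true"}), {}),
]
result = grade(items, {"q1": "B", "q2": "Photo synthesis", "q3": "false"}, pass_mark=70.0)
with_essay = grade(items + [Item("q4", 10.0, frozenset(), {})], {"q1": "b"}, pass_mark=70.0)
```

In the first attempt the learner earns 2 + 2.5 + 0 of 10 points, so the result is 45.0% and a fail; the returned status is what a downstream rule would use to assign remedial content or issue a certificate.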

Adaptive assessment engine

An adaptive assessment engine adjusts question difficulty based on the learner's real-time performance using Item Response Theory (IRT) models. Correct answers trigger harder questions. Incorrect answers trigger easier ones. The result is a more accurate measurement of ability in fewer questions. This is where the AI development services for the adaptive learning layer integrate directly into the assessment architecture. The question bank must be tagged with calibrated difficulty parameters before adaptive assessment is possible.
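To make the IRT idea concrete, the sketch below uses the two-parameter logistic (2PL) model, one common IRT variant, and selects the next question by maximum Fisher information at the current ability estimate. The item parameters and ability values are hypothetical, and the step that re-estimates ability after each response is deliberately left out.

```python
import math


def p_correct(theta: float, a: float, b: float) -> float:
    """2PL model: probability that ability theta answers the item correctly."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))


def information(theta: float, a: float, b: float) -> float:
    """Fisher information of a 2PL item at ability theta."""
    p = p_correct(theta, a, b)
    return a * a * p * (1.0 - p)


def next_item(theta: float, items: dict[str, tuple[float, float]], used: set[str]) -> str:
    """Pick the unused item that is most informative at the current estimate."""
    candidates = {k: v for k, v in items.items() if k not in used}
    return max(candidates, key=lambda k: information(theta, *candidates[k]))


# Hypothetical calibrated bank: item id -> (discrimination a, difficulty b).
ITEMS = {"easy": (1.0, -1.5), "medium": (1.0, 0.0), "hard": (1.0, 1.5)}
first = next_item(theta=0.0, items=ITEMS, used=set())
after_correct = next_item(theta=1.2, items=ITEMS, used={first})   # estimate rose
after_wrong = next_item(theta=-1.2, items=ITEMS, used={first})    # estimate fell
```

With equal discrimination, the most informative item is the one whose difficulty is closest to the current estimate, which is why a higher estimate selects the harder question and a lower estimate selects the easier one.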

Remote proctoring

A proctoring layer monitors learner behaviour during high-stakes assessments: webcam video, screen activity, audio, browser tab switches, copy-paste actions, and external device events. AI-based proctoring flags anomalies for human review, such as looking away from the screen, unusual keystroke patterns, or a second person visible in the webcam feed. Proctoring data is sensitive and regulated: it must be encrypted at rest, access-controlled to authorized reviewers, and purged according to the institution's data retention policy. FERPA (Family Educational Rights and Privacy Act) and GDPR (General Data Protection Regulation) both impose specific requirements on how proctoring records are stored and who may access them.

LTI and QTI compliance

LTI (Learning Tools Interoperability) is the protocol for connecting external assessment tools, such as Turnitin, ProctorU, and Respondus, to an LMS without a separate login. QTI (Question and Test Interoperability) is the format for importing and exporting question banks between systems. A custom LMS that supports both standards plugs into the broader EdTech ecosystem without custom integrations for every tool. The LMS backend must expose LTI endpoints and QTI parsers as part of the core architecture, not as an afterthought.

Building an LMS Assessment Layer? Here Is What the Engagement Looks Like.

Acquaint Softtech provides a dedicated software development team for LMS builds covering question bank design, auto-grading logic, LTI integration, and proctoring system architecture. Team profiles and a cost estimate will be returned within 48 hours. Every engineer is interviewable before commitment.

LMS Development Cost in 2026: Three Engagement Tiers

LMS development cost depends on four variables: learner scale target, number of integrations (Stripe, Zoom, BigBlueButton, SSO, SIS, HRIS), compliance requirements (FERPA, COPPA, GDPR), and whether the platform is single-tenant or multi-tenant. The table below shows the cost across three engagement tiers based on Acquaint Softtech's delivery operations.

In summary: a small LMS serving up to 5,000 learners with SCORM delivery, a quiz engine, and a basic progress dashboard costs $8,000 to $13,000 per month with a team of 2 to 3 engineers. A mid-scale platform serving 5,000 to 50,000 learners with adaptive content, live session integration, advanced analytics, and multi-tenant architecture runs $13,000 to $22,000 per month with 4 to 6 engineers. A large platform with 100,000+ learners, proctoring, AI grading, full SCORM/xAPI/LTI compliance, and a React Native mobile app runs $22,000 to $35,000 per month with 7 to 10 engineers.

These figures are achievable primarily because the delivery model relies on the ability to hire offshore developers, which reduces blended team rates significantly compared to onshore-only staffing without compromising delivery quality.

| Engagement Size | Learner Scale | Monthly Rate (Acquaint) | Equivalent In-House Cost (Monthly) | Annual Saving | Team Size |
| --- | --- | --- | --- | --- | --- |
| Small LMS | Up to 5,000 learners | $8,000 to $13,000 | $25,000 to $35,000 | $204,000 to $264,000 | 2 to 3 engineers |
| Mid-Scale LMS | 5,000 to 50,000 learners | $13,000 to $22,000 | $40,000 to $65,000 | $324,000 to $516,000 | 4 to 6 engineers |
| Large LMS | 100,000+ learners | $22,000 to $35,000 | $80,000 to $120,000 | $696,000 to $1,020,000 | 7 to 10 engineers |
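The Annual Saving figures appear to be twelve times the monthly difference between the in-house cost and the Acquaint rate, pairing the low ends together and the high ends together. That derivation is inferred from the numbers rather than stated in the text, and the short check below reproduces the column under that assumption.

```python
TIERS = {
    # tier: ((vendor low, vendor high), (in-house low, in-house high)), USD per month
    "Small LMS": ((8_000, 13_000), (25_000, 35_000)),
    "Mid-Scale LMS": ((13_000, 22_000), (40_000, 65_000)),
    "Large LMS": ((22_000, 35_000), (80_000, 120_000)),
}


def annual_saving(vendor: tuple[int, int], in_house: tuple[int, int]) -> tuple[int, int]:
    """Low end compares the two low figures; high end compares the two highs."""
    return (12 * (in_house[0] - vendor[0]), 12 * (in_house[1] - vendor[1]))


savings = {tier: annual_saving(v, h) for tier, (v, h) in TIERS.items()}
```

For the small tier this gives 12 × (25,000 − 8,000) = 204,000 at the low end and 12 × (35,000 − 13,000) = 264,000 at the high end, matching the table.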

What the monthly rate includes at Acquaint Softtech:

- Full-stack engineering: Laravel or Node.js backend API, React or Vue.js learner portal, and admin dashboard. Organizations looking to staff this layer can explore dedicated Laravel expertise for backend API development.

- SCORM 1.2 and SCORM 2004 runtime implementation; xAPI statement processing and LRS integration where required.

- Assessment engine: question bank schema, auto-grading logic, attempt tracking, and certificate generation.

- Sprint-based delivery with weekly demos and client access to project boards.

- QA (Quality Assurance) on every release, including cross-browser and mobile device testing.

- NDA (Non-Disclosure Agreement) and IP (Intellectual Property) assignment to the client from day one.

The largest variable in cost is integration scope. A Zoom integration for live sessions adds 2 to 4 weeks of engineering. A full SSO (Single Sign-On) implementation with an institutional identity provider adds 1 to 3 weeks. A proctoring integration with a third-party vendor adds 3 to 6 weeks. Teams evaluating software product engineering services for an LMS should scope these integrations explicitly before the engagement starts, not as change requests mid-sprint.

Right Way vs Common Mistake: Architecture Decisions That Determine Success

These are the six architecture decisions Acquaint Softtech reviews in every LMS scoping engagement. Each one is the point where teams either build correctly or spend the next 12 months paying for the mistake.

| ✓ RIGHT WAY | ✗ COMMON MISTAKE |
| --- | --- |
| Expose the SCORM JavaScript API through a properly scoped iFrame with an explicit API_1484_11 (SCORM 2004) or API (SCORM 1.2) object on the parent window, tested against the ADL SCORM Run-Time Reference Implementation before launch. | Assume the SCORM API 'just works' because the authoring tool generates compliant content. The LMS is responsible for the API implementation, not the content tool. A missing or incorrectly scoped API object silently loses every completion record. |
| Store each assessment attempt as an independent database record with attempt number, start time, end time, per-question responses, and final score. Append-only, never overwritten. | Store only the highest score or the most recent score per learner per assessment. This satisfies a completion dashboard but fails every audit that asks for attempt history, timing analysis, or score progression evidence. |
| Use 30-second heartbeat signals from the content layer to measure active engagement time. Record heartbeat gaps as unverified intervals in the progress record, not as absent periods. | Calculate time-on-task as (session end timestamp minus session start timestamp). A learner who opens a module and leaves for lunch shows 45 minutes of 'learning time'. This data is legally insufficient for compliance training records. |
| Build the progress tracking data model with three linked tables minimum: learner table, enrollment table, and completion event table. Join these at query time for all reporting. | Store a single 'percentage_complete' field per learner per course and update it on each event. This cannot reconstruct what the learner did, when they did it, or whether the completion was legitimate. |
| Implement multi-tenant data isolation at the database level (a separate schema per tenant, or row-level security on a shared schema) so that one tenant's learner records are architecturally inaccessible to another tenant's application queries. | Implement multi-tenant isolation only at the application layer with a tenant_id WHERE clause on every query. A single missing WHERE clause exposes one institution's learner records to another. This is a FERPA and GDPR violation. |
| Implement the LTI consumer role from the first sprint if the platform will integrate with external assessment tools. LTI endpoints are not easily retrofitted into an LMS that was not designed for them. | Plan to 'add LTI later' after the core platform is built. LTI requires specific session management and launch URL architecture that conflicts with most standard authentication middleware if not designed in from the start. |

The Governance Reference

For institutions in the United States, FERPA requires that student education records be accurate, accessible to authorized parties, and protected from unauthorized disclosure. For platforms serving learners under 13, COPPA imposes additional consent and data minimization requirements. Both frameworks apply to custom LMS platforms and should be reviewed against the architecture before the first production learner record is written. See the U.S. Department of Education FERPA guidance at studentprivacy.ed.gov and the FTC COPPA guidance at ftc.gov/coppa.

Ready to Scope Your LMS Build? Here Is the 48-Hour Process.

Share your requirements, and Acquaint Softtech returns a team structure, architecture outline, and cost estimate within 48 hours. You interview every engineer before commitment. IP is assigned to you from the first contract. Average team tenure on EdTech accounts: 24+ months.

Frequently Asked Questions

- What is the difference between an LMS and a content hosting platform?

A Learning Management System owns the full lifecycle of formal learning: enrollment, content delivery, progress tracking, assessment, grading, and certification. A content hosting platform stores and streams course files; it typically does not own the rest of that lifecycle. For institutions and organizations where learner records have regulatory or compliance significance, the LMS is the system of record. The content platform is not. The U.S. Department of Energy formally defines an LMS as an official training administration and recordkeeping system.

- What are the core modules an LMS must have to function correctly?

A functioning LMS requires six core modules: an enrollment and access control module, a content delivery and SCORM/xAPI runtime module, a progress persistence module, an assessment engine with question bank and grading logic, a reporting and analytics module, and a certificate and credential issuance module.

Optional modules extend this foundation: live session integration, a payment gateway, mobile offline sync, gamification, and adaptive learning paths. The six core modules are not optional. An LMS missing any one of them is an incomplete system that will fail under institutional pressure or compliance review.

- How does SCORM compliance work in a custom LMS?

SCORM compliance means the LMS supports a standard API that allows SCORM course content to communicate with the platform. It tracks learner progress, completion status, scores, and session data through functions like initialization, data saving, and progress updates.

- How does progress tracking work at the database level?

LMS progress tracking typically uses linked tables for learners, enrollments, and completion events to record actions like quiz submissions, video activity, and course progress. These records are used to calculate metrics such as completion percentage, active learning time, quiz scores, and learner progress through the course.
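A minimal version of the three linked tables, with completion percentage derived at query time, might look like this. SQLite is used only so the sketch is self-contained; the column names and the `required_modules` field on the enrollment row are simplifying assumptions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE learners (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
CREATE TABLE enrollments (
    id INTEGER PRIMARY KEY,
    learner_id INTEGER NOT NULL REFERENCES learners(id),
    course_id TEXT NOT NULL,
    required_modules INTEGER NOT NULL
);
CREATE TABLE completion_events (
    id INTEGER PRIMARY KEY,
    enrollment_id INTEGER NOT NULL REFERENCES enrollments(id),
    module_id TEXT NOT NULL,
    criterion_met INTEGER NOT NULL,
    occurred_at TEXT NOT NULL
);
INSERT INTO learners VALUES (1, 'Ada');
INSERT INTO enrollments VALUES (1, 1, 'course-101', 4);
INSERT INTO completion_events (enrollment_id, module_id, criterion_met, occurred_at) VALUES
    (1, 'm1', 1, '2026-01-05T09:00:00Z'),
    (1, 'm2', 0, '2026-01-06T09:00:00Z'),
    (1, 'm2', 1, '2026-01-07T09:00:00Z'),
    (1, 'm3', 1, '2026-01-08T09:00:00Z');
""")

# Completion percentage is derived at query time, never stored as a field.
row = conn.execute("""
    SELECT l.name, e.course_id,
           100.0 * COUNT(DISTINCT CASE WHEN c.criterion_met = 1 THEN c.module_id END)
                 / e.required_modules AS percent_complete
    FROM learners l
    JOIN enrollments e ON e.learner_id = l.id
    LEFT JOIN completion_events c ON c.enrollment_id = e.id
    GROUP BY l.id, e.id
""").fetchone()
```

The failed first attempt at module m2 stays in the event table, so the same data that yields 75% completion can also answer when each module was passed and how many tries it took.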

- How long does it take to build a custom LMS?

A minimum viable LMS with SCORM delivery, a quiz engine, a basic progress dashboard, and a certificate module takes 14 to 20 weeks with a team of 4 engineers. This assumes a clear scope, completed content requirements, and no significant third-party integrations. Adding live session integration extends the timeline by 4 to 6 weeks.

- What tech stack works best for an LMS in 2026?

For an LMS serving up to 50,000 concurrent learners: a Laravel (PHP) backend with a React or Vue.js frontend, PostgreSQL as the primary database, Redis for session caching and heartbeat management, and an S3-compatible object store for content files. This combination is proven, maintainable, and supported by a large pool of senior engineers.

- What happens to learner data and course content if the development engagement ends?

In a professional EdTech development engagement, the client owns the entire codebase and all learner data from the beginning of the project. The client retains full access to the repository, database, and infrastructure, with no vendor lock-in and no data stored on the development company's systems.

- Is a custom LMS appropriate for fewer than 5,000 learners?

For most organizations with fewer than 5,000 learners, platforms like Moodle, TalentLMS, or LearnDash provide a faster and more cost-effective solution than building a custom LMS. A custom platform is usually justified only when there are unique integration, white-labeling, compliance, or advanced learning experience requirements that existing platforms cannot support.
